You don't need to be a developer to automate your mobile app testing. Not in 2026.

For years, automated testing was gated behind programming skills. If you wanted to automate a login flow, you needed to write Python or Java, configure Appium, learn XPath, and debug flaky selectors. If your job title was "Manual QA Tester" or "Product Manager" or "QA Lead without a coding background", automation was something your engineering team did, not something you could touch.

That's changed. A new generation of no-code testing tools has made it possible for anyone who can describe a user flow in plain language to automate it. No scripts. No selectors. No environment variables.

This guide walks you through exactly how to automate mobile app testing without coding: what's possible, how it works, the different approaches available, and a complete step-by-step walkthrough using Drizz's Vision AI platform, with links to the official documentation so you can follow along.

If you're new to mobile testing in general, our Best Mobile Test Automation Frameworks (2026) guide provides the broader landscape.

Key Takeaways

- No-code mobile testing lets QA testers, PMs, and non-developers create and maintain automated test suites without writing scripts.

- Three approaches dominate the space: record-and-replay, visual flow builders, and plain English / Vision AI.

- Record-and-replay tools are easiest to start but break frequently and create heavy maintenance burdens.

- Visual flow builders offer more control but still depend on element selectors under the surface.

- Plain English + Vision AI (Drizz) is the most resilient approach: tests describe what you see on screen, and the AI identifies elements visually without selectors. Read our deep dive on how Vision Language Models power this technology.

- Drizz consists of two components: Drizz Desktop for local test creation and validation, and Drizz Cloud for scaled execution, reporting, and CI/CD integration.

Why Automation Felt Impossible (Until Now)

Traditional mobile test automation was built by developers, for developers. A typical Appium test requires:

- A programming language: Java, Python, JavaScript, or Ruby

- A test framework: JUnit, pytest, Mocha, or similar

- An automation server: Appium, installed via npm and configured with environment variables

- Platform SDKs: Android SDK, Xcode, JDK

- Element locators: XPath, accessibility IDs, and resource IDs copied from Appium Inspector

- Synchronization logic: explicit waits to handle loading states, animations, and async behavior

For an experienced developer, this takes half a day to set up and weeks to become productive with. For someone without coding experience, it's a wall.
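To make the "synchronization logic" item concrete, here is a minimal sketch of the kind of explicit wait a coded test has to carry. It uses only the Python standard library; `wait_until` and `FakeScreen` are hypothetical names invented for illustration and are not part of Appium or any other framework.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool],
               timeout: float = 5.0,
               interval: float = 0.1) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


class FakeScreen:
    """Hypothetical stand-in for a screen that finishes loading after a few polls."""

    def __init__(self, polls_until_ready: int) -> None:
        self._remaining = polls_until_ready

    def dashboard_visible(self) -> bool:
        self._remaining -= 1
        return self._remaining <= 0


screen = FakeScreen(polls_until_ready=3)
loaded = wait_until(screen.dashboard_visible, timeout=2.0, interval=0.01)
print("dashboard loaded:", loaded)
```

Every asynchronous step in a coded suite needs a helper like this, with a timeout tuned by hand, which is part of why setup and upkeep take so long.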

This meant that in most organizations, automation was bottlenecked by engineering capacity. Manual testers, who often have the deepest product knowledge and the sharpest eye for UX issues, couldn't contribute to the automation suite. Their expertise stayed locked in spreadsheets and manual test runs.

No-code tools remove that wall. If you know your app well enough to describe what a user does ("tap Login, enter email, tap Submit, verify dashboard"), you can automate it.

The Three Approaches to No-Code Mobile Testing

Not all no-code tools work the same way. Understanding the differences helps you pick the right one.

1. Record and Replay

How it works: You interact with your app on a device or emulator while the tool records your actions (taps, swipes, text input). It converts those actions into a replayable test script.

Examples: Katalon Recorder, Ranorex, some features of BrowserStack and Perfecto.

Pros:

- Fastest way to create your first test - literally just use the app

- No learning curve for the initial recording

- Good for quick smoke tests and demos

Cons:

- Extremely fragile. Recordings capture exact coordinates, element positions, and timing. Any UI change breaks the recording.

- Hard to maintain. When your app updates, you re-record from scratch rather than editing a specific step.

- Limited logic. Conditional flows, data-driven testing, and dynamic content handling are difficult or impossible.

- The "easy to create, impossible to maintain" trap: teams build 50 recorded tests, then spend all their time re-recording them.

Best for: Quick one-off validations and proof-of-concept demos. Not for production regression suites.
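The fragility of coordinate-based recordings can be shown with a toy model. The sketch below is not how any particular recorder works internally; the `Button` layout, pixel values, and the "Promo banner" element are all invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Button:
    label: str
    x: int
    y: int
    width: int
    height: int

    def contains(self, px: int, py: int) -> bool:
        in_x = self.x <= px < self.x + self.width
        in_y = self.y <= py < self.y + self.height
        return in_x and in_y


def tap_at(screen: list[Button], px: int, py: int) -> Optional[str]:
    """Return the label of the button under the point, or None if nothing is hit."""
    for button in screen:
        if button.contains(px, py):
            return button.label
    return None


# Layout when the test was recorded.
v1 = [Button("Login", 40, 600, 240, 60)]
# Next release: a banner takes the old spot and pushes Login down by 100 px.
v2 = [Button("Promo banner", 40, 600, 240, 60),
      Button("Login", 40, 700, 240, 60)]

recorded_tap = (160, 630)  # coordinates captured during recording on v1

print(tap_at(v1, *recorded_tap))
print(tap_at(v2, *recorded_tap))
```

On the original layout the recorded tap lands on "Login"; after the layout shift the same coordinates hit the banner, so the replay silently does the wrong thing.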

2. Visual Flow Builders

How it works: You build tests using a drag-and-drop interface or visual editor. Each step is a block ("Tap element," "Enter text," "Assert visible") that you configure by selecting elements from the screen.

Examples: ACCELQ, Leapwork, Sofy, TestGrid.

Pros:

- More structured than record-and-replay: tests are editable at the step level

- Reusable components and modular test design

- Some tools include AI-powered element healing that adapts when selectors change

- Better suited for regression suites than raw recordings

Cons:

- Still depends on element identifiers under the surface. The visual builder is a UI layer on top of selectors - when elements change significantly, tests still break.

- Learning curve for the platform's specific UI and workflow

- Vendor lock-in: your tests live inside the tool's proprietary format

- Enterprise pricing can be steep for teams just getting started

Best for: Mid-size QA teams with some technical depth who want a structured but low-code approach.

3. Plain English + Vision AI

How it works: You write test steps in plain English - "tap the Login button," "type user@example.com into the email field," "verify the dashboard is visible." The AI identifies elements visually on the rendered screen, the same way a human looks at a phone.

Example: Drizz.

Pros:

- Truly no-code - if you can describe a user flow, you can automate it

- No element selectors, no XPath, no accessibility IDs required

- Tests survive UI changes because they reference what's visible on screen, not internal element structures

- Works on release builds - test the actual app your users download

- Cross-platform - same test works on Android and iOS (Supported Platforms)

- Near-zero maintenance - the Vision AI adapts to visual changes automatically

Cons:

- Newer category, with a smaller ecosystem than established record-and-replay tools

- For apps with minimal text and many similar-looking icons, visual identification has fewer cues to tell elements apart

- Less granular device-level control than coded frameworks for specialized use cases (see Drizz Usage Expectations for details on what Drizz handles)

Best for: Teams where non-developers need to create and maintain tests, UIs change frequently, and long-term maintenance cost matters more than initial setup speed.
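The difference between selector-based lookup and lookup by what the user sees can be sketched with a toy UI tree. This is a deliberately simplified illustration: it matches on a text string, whereas a Vision AI engine works on the rendered screen, and the element IDs here are invented.

```python
from typing import Optional

Element = dict[str, str]


def find_by_id(tree: list[Element], resource_id: str) -> Optional[Element]:
    """Selector-style lookup: depends on an internal identifier."""
    return next((e for e in tree if e["resource_id"] == resource_id), None)


def find_by_text(tree: list[Element], text: str) -> Optional[Element]:
    """Lookup by the label a user would actually read on screen."""
    return next((e for e in tree if e["text"] == text), None)


# Same button before and after a refactor that renamed its internal ID.
before = [{"resource_id": "btn_login", "text": "Login"}]
after = [{"resource_id": "auth_primary_cta", "text": "Login"}]

print(find_by_id(before, "btn_login") is not None)   # selector works before
print(find_by_id(after, "btn_login") is not None)    # selector breaks after
print(find_by_text(after, "Login") is not None)      # visible label still matches
```

The trade-off runs the other way too: if the visible label itself changes, a lookup keyed on that label needs updating, while an unchanged internal ID would not.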

Understanding Drizz: Two Components, One Platform

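Before diving into the walkthrough, it's helpful to understand how Drizz is structured. The Product Components documentation explains the full architecture, but here's the summary: Drizz Desktop handles local test creation and validation, and Drizz Cloud handles scaled execution, reporting, and CI/CD integration.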

Step-by-Step: Automating Your First Test Without Code

Here's a practical walkthrough using Drizz. We'll automate a login flow - the most common first test for any mobile app. Each step references the relevant documentation page so you can go deeper.

Step 1: Set Up Drizz Desktop (5 minutes)

- Download Drizz Desktop from drizz.dev/start

- Connect your device: USB (real device), Android emulator, or iOS simulator. Drizz surfaces platform and state details automatically. See Supported Platforms for the full list of supported device types.

- Upload your app build (APK or IPA)

That's it. No Node.js. No JDK. No SDK configuration. No environment variables. The Drizz Desktop App documentation covers the complete setup process.

Step 2: Understand the Command System

Drizz tests are built from structured commands - each step describes one user action or verification. The full list is available in the Commands Reference, but the most common ones for getting started are:

- Tap: Tap on an element identified by its visible text or description

- Type / Enter Text: Input text into a field

- Verify / Assert: Check that something is visible on screen

- Swipe / Scroll: Navigate through scrollable content

- Wait: Pause for a specific condition or duration

- Launch App: Start or restart the application

Commands support conditional logic and reusable modules for more complex scenarios. See What You Can Automate for the full scope of supported interactions.

Step 3: Write Your Test Plan

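A Test Plan in Drizz is an ordered sequence of commands that describes a user flow. Open a new test plan and describe the login flow one step at a time: launch the app, tap the "Login" button, type the email and password into their fields, tap "Sign In", then verify that the "Welcome" text and the dashboard screen appear.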

Each step describes exactly what a user would do and see. The Vision AI engine interprets the rendered screen to find and interact with the described elements.
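One way to picture "an ordered sequence of commands" is as plain data. The sketch below models the login flow that way and adds a small sanity check. It is an illustration only: the `Step` class, the action names, and the `validate` helper are hypothetical and do not reflect Drizz's actual file format or command syntax, which the Commands Reference defines.

```python
from dataclasses import dataclass

# Hypothetical action names, loosely mirroring the command list above.
ALLOWED_ACTIONS = {"launch_app", "tap", "type", "verify", "swipe", "wait"}


@dataclass(frozen=True)
class Step:
    action: str
    target: str = ""
    value: str = ""


def validate(plan: list[Step]) -> list[str]:
    """Return a list of human-readable problems; empty means the plan looks sane."""
    problems: list[str] = []
    for number, step in enumerate(plan, start=1):
        if step.action not in ALLOWED_ACTIONS:
            problems.append(f"step {number}: unknown action '{step.action}'")
        elif step.action == "type" and not step.value:
            problems.append(f"step {number}: 'type' needs a value")
        elif step.action in {"tap", "type", "verify"} and not step.target:
            problems.append(f"step {number}: '{step.action}' needs a target")
    return problems


login_plan = [
    Step("launch_app"),
    Step("tap", target="Login button"),
    Step("type", target="email field", value="user@example.com"),
    Step("type", target="password field", value="<password>"),  # placeholder
    Step("tap", target="Sign In button"),
    Step("verify", target="Welcome text"),
    Step("verify", target="dashboard screen"),
]

print(validate(login_plan))
```

Note that every target is a description of something visible, not a selector, which is the property the rest of this guide relies on.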

Step 4: Run the Test Locally

Click Run in Drizz Desktop. The Vision AI will:

- Launch your app on the connected device

- Look at the screen and find the "Login" button visually

- Tap it

- Find the email field by visual context, type the text

- Find the password field, type the text

- Find the "Sign In" button, tap it

- Verify "Welcome" text appears on screen

- Verify the dashboard screen loaded

You can watch each step execute in real time on the device. Drizz provides immediate visibility into execution flow, outcomes, and on-device behavior.

Step 5: Review Results and Debug Failures

When a test passes, you see step-by-step results with screenshots showing exactly what happened at each step.

When a step fails, Drizz generates AI-based failure reasoning explaining what was expected, what was observed, and why execution failed. Visual highlights and device logs are included automatically. This is covered in detail in the Common Issues documentation.

No digging through raw logs. The failure explanation tells you whether the issue is a real bug or a test configuration problem.

Step 6: Scale to Your Full Test Suite

Once your login test works, build out your critical flows:

- Onboarding / sign-up

- Search and browse

- Add to cart / checkout

- Profile editing

- Settings and permissions

- Push notification handling

- Multi-app journeys (deep links, OTP flows)

The Different Use Cases Supported by Drizz documentation covers the full range of scenarios you can automate, including multi-app workflows, API validation integrated into UI flows, and variable network conditions.

For test authoring best practices (naming conventions, modular structure, reusable flows, and conditional logic), see the Best Practices guide.

Step 7: Move to CI/CD with Drizz Cloud

Once your tests are validated locally, move them to Drizz Cloud for automated execution in your CI/CD pipeline.

The CI/CD Platform Integration documentation covers setup for:

- GitHub Actions: trigger test runs on every PR or push

- Jenkins: integrate with existing Jenkins pipelines

- Bitrise: native mobile CI integration

- GitLab CI, Azure DevOps, and other platforms via Drizz's API

For API-based integration, the Drizz API Integration docs walk through the full lifecycle:

- Authentication: secure token-based access

- Upload: push app builds programmatically

- Trigger Run: execute test plans via API

- Error Codes: handle responses and failures
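For readers wiring this into a pipeline script, the lifecycle above follows a familiar token-authenticated HTTP pattern. The sketch below shows that general shape using Python's standard library. Everything specific in it is an assumption: the base URL is a placeholder on the reserved `.invalid` domain, and the `/runs` path, payload fields, and retry rule are invented, not taken from the Drizz API. Use the API Integration docs for the real endpoints and error codes.

```python
import json
import urllib.request

# Placeholder only. This is NOT a real Drizz endpoint.
BASE_URL = "https://api.example.invalid/v1"


def build_trigger_request(token: str, plan_id: str, build_id: str) -> urllib.request.Request:
    """Build (but do not send) a token-authenticated POST that would start a run."""
    payload = json.dumps({"plan_id": plan_id, "build_id": build_id}).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/runs",          # hypothetical path
        data=payload,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


def should_retry(status: int) -> bool:
    """Generic HTTP convention: retry on rate limiting and server-side errors."""
    return status == 429 or 500 <= status < 600


request = build_trigger_request("test-token", "login-plan", "build-123")
print(request.get_method(), request.full_url)
```

The request is only constructed here, never sent, so the sketch can be run safely without credentials.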

Cloud devices are provisioned fresh for every run, ensuring no residual state impacts results. Parallel execution distributes test plans across available device slots automatically.
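To get a feel for what distributing test plans across device slots means, here is a minimal round-robin sketch. The source does not say which scheduling strategy Drizz Cloud uses, so round-robin is purely an assumption, and `distribute` is a hypothetical helper.

```python
def distribute(plans: list[str], slots: int) -> list[list[str]]:
    """Assign plans to device slots in round-robin order."""
    if slots < 1:
        raise ValueError("need at least one device slot")
    buckets: list[list[str]] = [[] for _ in range(slots)]
    for index, plan in enumerate(plans):
        buckets[index % slots].append(plan)
    return buckets


plans = ["login", "signup", "search", "checkout", "profile"]
for slot, assigned in enumerate(distribute(plans, slots=2), start=1):
    print(f"slot {slot}: {assigned}")
```

With two slots, five plans finish in roughly three plan-lengths of wall-clock time instead of five, assuming plans take similar time to run.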

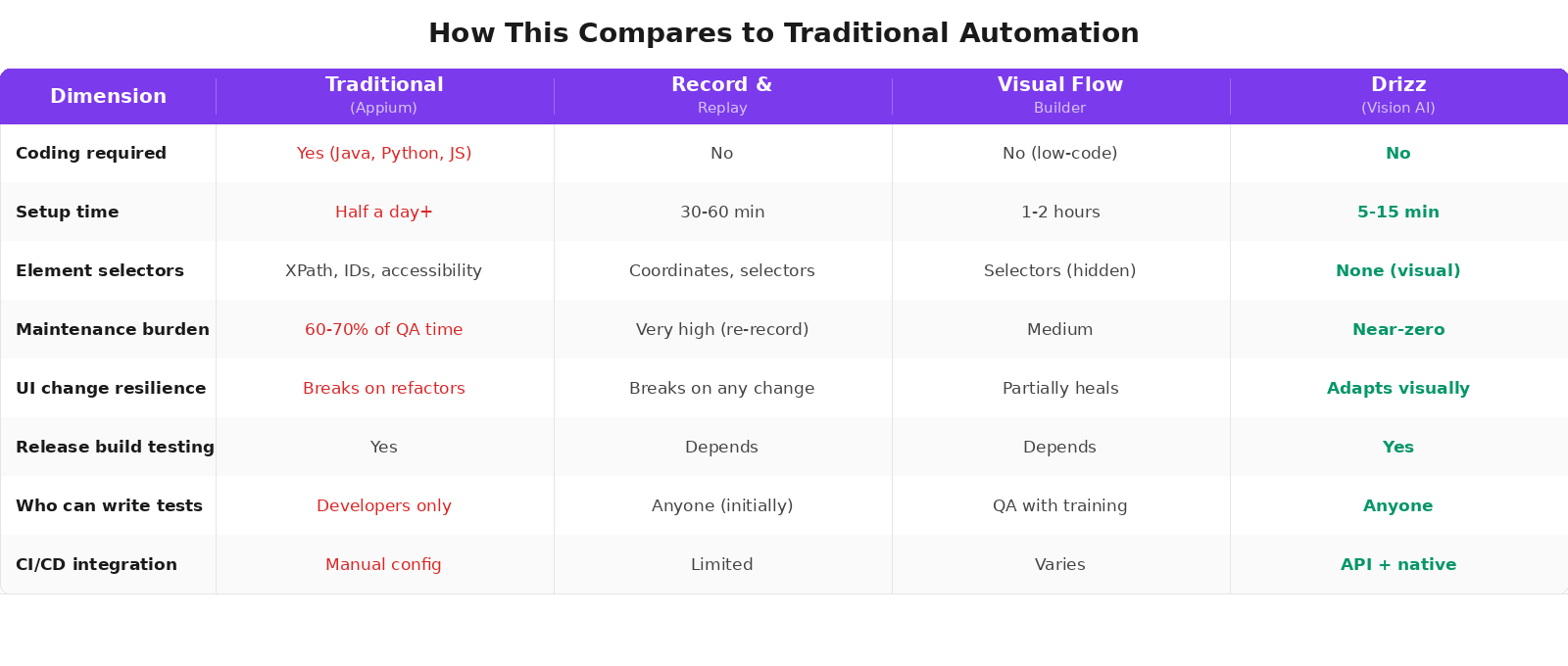

How This Compares to Traditional Automation

Common Concerns (And Honest Answers)

"Can no-code testing handle complex scenarios?"

It depends on the approach. Record-and-replay tools struggle with anything beyond linear flows. Visual flow builders handle moderate complexity. Drizz supports conditional logic, reusable modules, and multi-step branching - enough for the vast majority of E2E regression scenarios. The Drizz documentation covers the full scope of what you can automate, including multi-app journeys, API calls integrated into UI flows, and handling dynamic pop-ups and overlays.

For extremely specialized use cases (biometric testing, sensor data, low-level OS APIs), coded frameworks still offer deeper control. The Drizz documentation is transparent about what Drizz handles and what falls outside its scope.

"Will my tests be as reliable as coded tests?"

Vision AI tests are typically more reliable than coded tests at scale because they don't depend on selectors that break with every UI change. Drizz reports 97%+ test accuracy in production and 95%+ test stability, compared to 70-80% for typical Appium suites. The maintenance difference compounds over time - coded suites get flakier as they grow; visual suites stay stable.

"Is no-code testing a precursor to 'real' automation?"

It can be, but it doesn't have to be. Some teams use no-code as an entry point and later add coded tests for specialized scenarios. Others use Drizz as their primary automation platform indefinitely because the maintenance math favors it at any scale. The choice depends on your team's needs, not on a hierarchy of "real" vs "not real" automation.

"What about CI/CD integration?"

Drizz integrates natively with GitHub Actions, Jenkins, Bitrise, GitLab CI, and Azure DevOps. Tests run automatically on every build, PR, or scheduled interval. The Drizz documentation provides setup guides for each CI/CD platform, and the API integration docs allow fully programmatic control over uploads, test triggers, and result retrieval.

"Can I version-control my tests?"

Yes. Drizz test files are simple text-based instructions that commit cleanly into Git repositories. Engineers can branch, diff, and review test logic just like application code. This is a significant advantage over visual flow builders where tests live in proprietary formats.
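The practical benefit of text-based tests is that a change shows up as a readable diff. The sketch below uses Python's `difflib` to produce the kind of unified diff a reviewer would see in a pull request. The step wording and the `login.txt` filename are illustrative assumptions, not Drizz's actual file format.

```python
import difflib

old_steps = [
    "Tap the Login button",
    "Type user@example.com into the email field",
    "Tap Submit",
    "Verify the dashboard is visible",
]
# The app renamed its submit button, so one step changes.
new_steps = [
    "Tap the Login button",
    "Type user@example.com into the email field",
    "Tap Sign In",
    "Verify the dashboard is visible",
]


def review_diff(old: list[str], new: list[str], name: str) -> list[str]:
    """Return unified-diff lines, in the same style `git diff` shows."""
    return list(difflib.unified_diff(
        old, new,
        fromfile=f"a/{name}", tofile=f"b/{name}",
        lineterm="",
    ))


for line in review_diff(old_steps, new_steps, "login.txt"):
    print(line)
```

A one-line change to the flow produces a one-line change in the diff, which is what makes code review of test logic workable for non-developers as well.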

"What happens when a test fails?"

Drizz provides AI-based failure reasoning for every failure explaining what was expected, what was observed, and why execution failed. Step-level screenshots, visual highlights, and device logs are included automatically. For Cloud runs, execution metadata, logs, and audit trails are preserved in a structured format for traceability across releases. See the Drizz documentation for debugging guidance.

Who This Is For

This approach works best for:

- Manual QA testers who want to automate without learning Python or Java

- QA leads who need to scale automation without hiring more developers

- Product managers who want to define and validate test scenarios using product language

- Startup teams where one person wears multiple hats and can't spend weeks learning Appium

- Enterprise QA teams where the 60% maintenance tax of selector-based automation has become unsustainable

- Flutter, React Native, and cross-platform teams where traditional selector-based tools are structurally more fragile due to custom rendering engines

If any of these describe your situation, you can have your first automated test running in under 15 minutes.

Drizz Documentation Reference

For quick access to the docs referenced throughout this guide:

Getting Started

- Download Drizz Desktop from drizz.dev/start

- Connect your device: USB, emulator, or simulator

- Upload your app: no SDK changes, no code modifications

- Write your first test in plain English using the Commands Reference

- Run it locally and review results with AI-powered failure reasoning

- Move to CI/CD using the CI/CD Integration guide

Your 20 most critical test cases can be automated in a day without writing a single line of code.

FAQ

Do I need any technical background to use Drizz?

No. If you can describe what a user does in your app ("tap Login, enter email, tap Submit"), you can write automated tests. The Core Concepts documentation explains the foundational ideas in plain language. Familiarity with your app's user flows is more important than any technical skill.

Can no-code tests run on real devices?

Yes. Drizz supports real devices (via USB), Android emulators, and iOS simulators. Drizz Cloud provides additional real device infrastructure with clean provisioning per run for parallel execution at scale.

How do no-code tests handle app updates?

This is where the approach matters. Record-and-replay tests usually break on any update. Visual flow builders partially self-heal. Drizz's Vision AI adapts automatically because it identifies elements visually - if the button still says "Login" on screen, the test still works regardless of what changed under the hood. Self-repairing tests are a core capability of the platform.

Can I use Drizz alongside coded frameworks?

Absolutely. Many teams use Drizz for broad regression coverage (written by QA testers and PMs) alongside Detox or Espresso for unit-level UI tests (written by developers). The two approaches complement each other: no-code handles breadth, coded handles depth. See our Detox vs Appium vs Drizz comparison for how teams layer these approaches.

What types of mobile apps can be tested?

Drizz supports native Android, native iOS, React Native, Flutter, hybrid (WebView), and mobile web apps. See Supported Platforms for the complete list. Because Vision AI identifies elements on the rendered screen rather than through framework-specific APIs, it works regardless of how your app is built.

Where can I find the full documentation?

The complete Drizz documentation is available at docs.drizz.dev. Start with the Overview and work through the Getting Started section.