Why Mobile CI Pipelines Produce Flaky Test Failures

Mobile CI pipelines often fail for reasons unrelated to product defects. UI rendering delays, locator drift, and inconsistent device environments generate intermittent failures that pollute build signals. Teams re-run pipelines, triage false alarms, and lose confidence in regression suites.

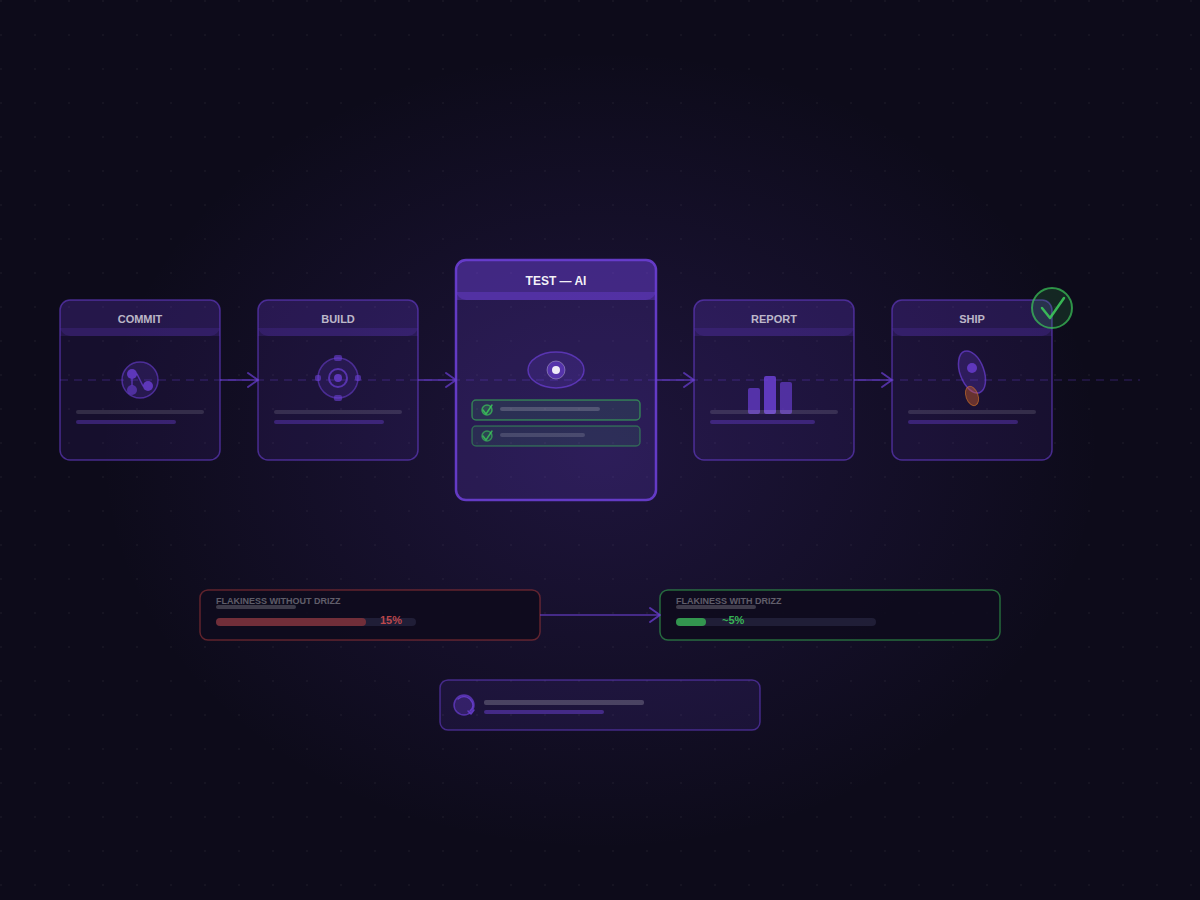

Drizz addresses this problem directly with a vision-driven mobile testing platform designed to reduce CI noise caused by unstable UI tests. The system combines Vision AI execution, deterministic device environments, automatic retries, and artifact-rich debugging so that CI results reflect actual product behavior rather than infrastructure instability.

The result is measurable signal improvement across mobile regression pipelines.

Reported automation metrics include:

- ~5% flakiness compared with ~15% typical in locator-based automation frameworks

- 97%+ execution success rate across CI runs

- ~80% reduction in testing time for regression suites

- ~20% overall sprint time saved through automated test workflows

Vision-Based Automation That Eliminates Locator Drift

Traditional mobile automation frameworks depend on element locators such as XPath, accessibility IDs, or CSS selectors. A small UI update can invalidate these identifiers, so tests fail even though the application behaves correctly.

Drizz runs UI automation through a Vision AI engine that interprets the screen visually. Instead of relying on selectors, the engine recognizes UI elements through layout, visual context, and screen structure.

Because the interaction layer is visual, tests remain stable when:

- UI element identifiers change

- components move within the layout

- the accessibility tree shifts

- app versions introduce minor UI redesigns

This architecture avoids one of the largest causes of CI noise in mobile pipelines: locator drift.

The system also includes self-healing execution. When a UI element shifts or appears in a different location, the engine identifies the intended interaction and updates the step dynamically so the test continues without breaking.

Filtering Transient Failures with Automatic Retry Logic

Transient failures are common in mobile pipelines. UI rendering delays, animation timing differences, and temporary device latency can cause tests to fail once and pass immediately afterward.

Drizz includes built-in retry logic at the test plan level.

When a test fails, the platform automatically re-executes it one additional time. A test that fails again on the retry points to a deterministic defect, while a test that passes on the retry points to transient instability, so CI pipelines can tell the two apart.

This behavior helps teams identify:

- consistent product bugs

- timing-related UI failures

- environment-level anomalies

By filtering intermittent failures automatically, the retry mechanism improves the signal-to-noise ratio of CI runs.
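
The retry-once behavior described above can be sketched in a few lines. This is a minimal illustration, not Drizz's implementation: the `Outcome` enum and `run_with_single_retry` function are hypothetical names, and a test is modeled as a plain callable that returns `True` on success.

```python
from enum import Enum
from typing import Callable


class Outcome(Enum):
    PASSED = "passed"
    FLAKY = "flaky"    # failed once, then passed on the retry
    FAILED = "failed"  # failed on both attempts


def run_with_single_retry(test: Callable[[], bool]) -> Outcome:
    """Run a test and retry it exactly once if the first attempt fails."""
    if test():
        return Outcome.PASSED
    # One additional attempt, mirroring the single retry described above.
    if test():
        return Outcome.FLAKY
    return Outcome.FAILED


# Usage: a simulated test that fails on its first call only.
attempts = iter([False, True])
result = run_with_single_retry(lambda: next(attempts))
print(result.value)
```

Keeping a separate "flaky" outcome, rather than folding it into "passed", preserves the information that a retry was needed, which is what lets a team track timing and environment issues over time.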

Adaptive Waits That Stabilize Mobile UI Execution

Many flaky UI tests originate from fixed delays written into automation scripts. Hardcoded waits assume the UI will load within a specific timeframe, but real devices rarely behave deterministically.

Drizz removes static timers entirely.

The execution engine detects the expected UI state before proceeding to the next step. Instead of waiting an arbitrary number of seconds, the platform monitors screen state until the correct element appears.

This adaptive synchronization stabilizes flows where:

- screens render asynchronously

- network calls introduce variable delays

- animations block user interaction temporarily

By aligning execution timing with real UI readiness, Drizz eliminates a common source of timing-based flakiness.
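
The difference between a fixed delay and a readiness check can be shown with a generic polling helper. This is a sketch of the general technique only: `wait_until`, its timeout, and its poll interval are assumptions for illustration, and the condition here is a simple callable rather than the visual screen-state detection Drizz performs.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool],
               timeout_s: float = 10.0,
               poll_interval_s: float = 0.05) -> bool:
    """Poll `condition` until it returns True or `timeout_s` elapses."""
    deadline = time.monotonic() + timeout_s
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_interval_s)


# Usage: a simulated screen that becomes ready 0.2 s after start.
ready_at = time.monotonic() + 0.2
became_ready = wait_until(lambda: time.monotonic() >= ready_at, timeout_s=2.0)
```

The step proceeds as soon as the condition holds, so a fast device is not held back by a worst-case delay, and a slow device gets up to the full timeout before the step is reported as failed.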

Deterministic Device Environments for CI Execution

Unstable device environments can create CI failures even when tests are written correctly. Residual application state, corrupted installations, and background processes frequently cause inconsistent results.

Drizz Cloud provisions a fresh device environment for every run.

Each execution begins with:

- a clean device instance

- a fresh application installation

- an isolated runtime environment

- device state reset between runs

The system supports execution across:

- Android real devices

- Android emulators

- iOS simulators

- mobile web environments

Devices are discovered through ADB and Xcode runtimes, enabling unified orchestration across device types and operating systems.
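
As a rough illustration of ADB-based discovery, the sketch below parses the text printed by `adb devices` and keeps only the serials reported in the `device` state. The function names are hypothetical, and how Drizz performs discovery internally is not stated in this article. iOS simulators can be listed in a similar way with `xcrun simctl list devices --json`.

```python
import subprocess
from typing import List


def parse_adb_devices(output: str) -> List[str]:
    """Return serials of devices that `adb devices` reports as ready."""
    serials = []
    for line in output.splitlines():
        line = line.strip()
        # Skip blanks, the header line, and "* daemon ..." status lines.
        if not line or line.startswith("List of devices") or line.startswith("*"):
            continue
        parts = line.split()
        if len(parts) >= 2 and parts[1] == "device":
            serials.append(parts[0])
    return serials


def discover_android_devices() -> List[str]:
    """Call the real `adb devices` command (requires adb on PATH)."""
    completed = subprocess.run(["adb", "devices"],
                               capture_output=True, text=True, check=True)
    return parse_adb_devices(completed.stdout)


# Usage with sample output, so the sketch runs without adb installed.
sample = "List of devices attached\nemulator-5554\tdevice\nR58M12345AB\toffline\n\n"
ready = parse_adb_devices(sample)
```

Filtering on the `device` state excludes entries that are `offline` or `unauthorized`, which would otherwise surface later as environment failures.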

Parallel Device Execution That Shortens Regression Cycles

Regression test suites often grow large enough that sequential execution becomes impractical for CI pipelines.

Drizz Cloud distributes tests across device pools and runs them concurrently.

Parallel execution capabilities include:

- concurrent device slots across the cloud infrastructure

- cross-device regression execution

- distributed test plan orchestration

- isolated device allocation per run

Each device runs independently with its own environment, ensuring no shared state or cross-test interference. This allows teams to scale regression suites without increasing pipeline instability.
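
Distributing a suite across isolated devices can be sketched with a thread pool. This is an assumption-laden illustration: `run_on_device` is a hypothetical stand-in for a real device run, the device names are invented, and the round-robin sharding shown here is one simple strategy, not necessarily the one Drizz Cloud uses.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def run_on_device(device: str, tests: List[str]) -> Dict[str, str]:
    """Hypothetical stand-in for running tests on one isolated device."""
    return {test: f"passed on {device}" for test in tests}


def shard(tests: List[str], n: int) -> List[List[str]]:
    """Split tests round-robin into n shards."""
    return [tests[i::n] for i in range(n)]


def run_in_parallel(devices: List[str], tests: List[str]) -> Dict[str, str]:
    """Run one shard per device concurrently and merge the results."""
    if not devices:
        raise ValueError("at least one device is required")
    shards = shard(tests, len(devices))
    results: Dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=len(devices)) as pool:
        # Each worker receives its own device and its own shard,
        # so no state is shared between concurrent runs.
        for partial in pool.map(run_on_device, devices, shards):
            results.update(partial)
    return results


results = run_in_parallel(["device-a", "device-b"],
                          ["login", "checkout", "search"])
```

Because each shard is bound to exactly one device, a failure on one device cannot leak state into tests running elsewhere.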

Cached Interactions That Speed Up Repeated Steps

Mobile test flows often contain repeated actions such as tapping navigation elements or typing common values.

Drizz Vision AI caches previously validated UI interactions, which reduces recognition overhead when the same action recurs in later steps.

Repeated interactions can run up to 3× faster due to cached recognition of UI elements and flows.
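
The general idea of caching a recognized interaction can be sketched as a lookup keyed by screen and instruction. Everything here is hypothetical: `InteractionCache`, `slow_locate`, the screen identifier, and the coordinates are invented for illustration, and the article does not describe how Drizz keys or validates its cache.

```python
from typing import Callable, Dict, Tuple

Point = Tuple[int, int]


class InteractionCache:
    """Remember where an instruction resolved to on a given screen."""

    def __init__(self, locate: Callable[[str, str], Point]) -> None:
        self._locate = locate  # the slow recognition step
        self._cache: Dict[Tuple[str, str], Point] = {}
        self.misses = 0

    def find(self, screen_id: str, instruction: str) -> Point:
        key = (screen_id, instruction)
        if key not in self._cache:
            # Only pay for recognition the first time this pair is seen.
            self.misses += 1
            self._cache[key] = self._locate(screen_id, instruction)
        return self._cache[key]


def slow_locate(screen_id: str, instruction: str) -> Point:
    """Hypothetical stand-in for visual recognition of a UI element."""
    return (100, 200)


cache = InteractionCache(slow_locate)
first = cache.find("home", "tap the Settings tab")
second = cache.find("home", "tap the Settings tab")
```

A production cache would also need to confirm that the cached location still matches the current screen before reusing it, which this sketch omits.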

Observability That Explains Why Tests Fail

When a mobile test fails in CI, engineers typically need to reconstruct the failure manually. Traditional logs rarely contain enough context to diagnose the problem quickly.

Drizz produces detailed artifacts for every run:

- step-level screenshots

- structured execution logs

- timestamps for each action

- execution timelines

- run summaries and failure summaries

Failures include AI-generated reasoning that explains:

- what the system observed on the screen

- what the expected state was

- why the step could not proceed

The report structure allows engineers to trace failures directly to the UI state that caused them, reducing debugging time significantly.

CI/CD Integration Across Modern DevOps Pipelines

Drizz integrates directly into existing CI infrastructure through API-driven workflows.

Supported CI platforms include:

- GitHub Actions

- Jenkins

- GitLab CI

- Bitbucket Pipelines

- Azure DevOps

CI pipelines can:

- upload application binaries

- trigger test plan execution

- retrieve structured results

- collect logs and screenshots

Typical CI workflows include:

- pull request smoke tests

- nightly regression suites

- release gate validation

- feature branch validation

All execution artifacts remain accessible through unique run IDs, enabling traceability across builds and releases.
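
The upload, trigger, and retrieve steps above can be sketched as a small CI gate. This is not the Drizz API: `PlatformClient`, its three methods, `RunResult`, and the file and plan names are all hypothetical, and an in-memory `FakeClient` replaces real network calls so the sketch runs on its own.

```python
from dataclasses import dataclass, field
from typing import List, Protocol


@dataclass
class RunResult:
    run_id: str
    passed: bool
    artifacts: List[str] = field(default_factory=list)


class PlatformClient(Protocol):
    """Hypothetical client interface; not the real Drizz API."""

    def upload_binary(self, path: str) -> str: ...
    def trigger_plan(self, build_id: str, plan: str) -> str: ...
    def get_result(self, run_id: str) -> RunResult: ...


def run_ci_gate(client: PlatformClient, binary_path: str, plan: str) -> RunResult:
    """Upload a build, trigger a test plan, and fetch the structured result."""
    build_id = client.upload_binary(binary_path)
    run_id = client.trigger_plan(build_id, plan)
    return client.get_result(run_id)


class FakeClient:
    """In-memory stand-in so the sketch runs without network access."""

    def upload_binary(self, path: str) -> str:
        return "build-1"

    def trigger_plan(self, build_id: str, plan: str) -> str:
        return f"run-{build_id}-{plan}"

    def get_result(self, run_id: str) -> RunResult:
        return RunResult(run_id, True, ["step-1.png", "run.log"])


result = run_ci_gate(FakeClient(), "app-release.apk", "smoke")
exit_code = 0 if result.passed else 1  # a CI job would exit with this code
```

Returning the run ID with the result keeps every artifact traceable to the build that produced it, which matches the traceability goal described above.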

Framework-Free Test Authoring With Plain English Automation

Drizz does not require traditional frameworks such as Appium or XCUITest.

Tests are written as plain English instructions interpreted by the Vision AI engine. Because no framework-specific code is involved, teams avoid:

- selector maintenance

- platform-specific code duplication

- separate test suites for Android and iOS

A single test suite can run across Android, iOS simulators, and mobile web environments.

This approach increases authoring speed. Teams report:

- ~200 automated tests authored per QA engineer per month

- ~10× faster authoring compared with traditional Appium workflows

Mobile CI Pipelines With Reliable Failure Signals

Mobile automation should provide reliable signals about product quality. When pipelines fail frequently for non-product reasons, engineers lose trust in test results.

Drizz improves CI signal reliability through:

- Vision AI UI interaction

- automatic retry logic

- adaptive synchronization

- deterministic device environments

- parallel device orchestration

- artifact-rich debugging

Across regression pipelines, these mechanisms reduce intermittent failures and produce CI results that more accurately reflect real product behavior.

For teams running large mobile regression suites, reducing CI noise is often the difference between a pipeline engineers trust and one they ignore.