You've upgraded every layer of your mobile stack except the one that breaks the most.

Let me start with a confession: I've spent the last 6 months interviewing mobile engineers about their tooling. Flutter devs at fintech unicorns. React Native teams at Series B startups. Android leads at companies you've definitely used this week.

The pattern was the same almost every time: teams running a cutting-edge 2024/2025 stack for everything from frontend to analytics, but still stuck with 2012-era testing tools held together by brittle selectors and Thread.sleep().

This isn't a listicle. This is an audit of what elite mobile teams are actually using in 2026, with adoption stats, market data, and the uncomfortable truth about why your testing layer is probably the weakest link in an otherwise modern stack.

If you're shipping mobile apps and still wrestling with flaky Appium tests, broken selectors, and QA bottlenecks, keep reading.

The 8-Layer Modern Mobile Stack: A Research-Backed Breakdown

Before we talk about the testing gap, let's establish what "modern" actually looks like across every layer. I pulled adoption data from Stack Overflow's 2025 Developer Survey, Statista, and conversations with 40+ mobile engineers.

Layer 1: Frontend Framework

The UI toolkit your users actually interact with determines how your app looks, feels, and performs across platforms.

The Winners: Flutter (46% market share) and React Native (35%)

The cross-platform war is effectively over. Flutter won on developer experience; React Native won on ecosystem familiarity. The rest (Kotlin Multiplatform at 9%, native-only) are niche plays.

The breakdown:

- Flutter: Google Pay, BMW, Alibaba, Toyota. 170K GitHub stars. Impeller rendering engine delivers 60-120 FPS. Dart's learning curve is the only friction.

- React Native: Instagram, Coinbase, Shopify. 120K GitHub stars. New Architecture (Fabric renderer) finally closed the performance gap. JavaScript ecosystem means faster hiring.

Layer 2: Backend-as-a-Service (BaaS)

The server-side engine that handles your database, authentication, storage, and real-time sync so your team can focus on the app, not infrastructure.

The Winners: Firebase (still dominant for speed) and Supabase (the open-source insurgent)

Supabase hit a $2 billion valuation in April 2025 and now serves 1.7 million+ developers. 40% of recent Y Combinator batches build on Supabase. That's not a trend; that's a generational shift.

The breakdown:

- Firebase: Battle-tested real-time sync. Tight Google Cloud integration. Pay-per-read pricing can spike unpredictably.

- Supabase: PostgreSQL foundation (55% of developers use Postgres in 2025). Predictable tiered pricing. Row-level security baked in. Open-source escape hatch.

Layer 3: CI/CD Pipeline

The automated assembly line that builds, tests, and ships your code every time you push, turning commits into production releases without manual intervention.

The Winners: GitHub Actions (dominant for most), Bitrise (mobile-specialized)

72% of agile teams now use automated test frameworks in CI/CD pipelines. GitHub Actions leads for personal and open-source projects; Jenkins and GitLab CI/CD persist in enterprise (legacy inertia).

The breakdown:

- GitHub Actions: Lives where your code lives. 60%+ higher deployment frequency when paired with proper test automation. Works for 80% of mobile teams.

- Bitrise: Purpose-built for mobile. Pre-warmed macOS VMs, automatic Xcode updates, native code signing. Teams report 28% faster builds on average vs. GitHub Hosted Runners.

Layer 4: Product Analytics

The insight layer that tracks how users actually behave in your app: what they tap, where they drop off, and which features drive retention.

The Winners: Mixpanel ($170M revenue in 2024), Amplitude ($312M revenue)

The product analytics market is projected to hit $45 billion by 2033. Every serious mobile team tracks events, funnels, and retention. This is table stakes now.

The breakdown:

- Mixpanel: Easier setup, faster time-to-insight. 8,000+ businesses, trillions of events processed. Best for product managers who want answers without SQL.

- Amplitude: Deeper analytics, better for experimentation. Data governance tools for enterprise. Steeper learning curve.

Layer 5: Crash Reporting & Monitoring

The early warning system that catches crashes, errors, and performance issues in production before your users flood the app store reviews.

The Winners: Sentry (1.3M+ users), Firebase Crashlytics (free tier advantage)

Mobile apps with 99.9%+ crash-free sessions see 4x higher retention. Crash reporting isn't optional, it's survival.

The breakdown:

- Sentry: Cross-platform (web, mobile, backend) visibility. AI-powered root cause analysis (94.5% accuracy). Open-source option available.

- Crashlytics: Free, deeply integrated with Firebase. Good enough for most teams. Limited to mobile.

Layer 6: Feature Flags & Experimentation

The control panel that lets you toggle features on and off remotely, run A/B tests, and roll out changes to 1% of users before going to 100%.

The Winners: LaunchDarkly, Statsig, Amplitude Experiment

Progressive rollouts and A/B testing are standard practice. 60%+ of engineering teams use feature flags to manage risk.
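
The "1% of users before going to 100%" rollout is commonly done by hashing each user into a stable bucket, so the same user keeps the same answer as the percentage grows. Here is a minimal sketch of that idea. The `PercentageRollout` class and the `new-checkout` flag key are hypothetical, and this is not the API of LaunchDarkly, Statsig, or Amplitude Experiment.

```java
import java.nio.charset.StandardCharsets;
import java.util.zip.CRC32;

/** Hypothetical helper: deterministic percentage rollout by hashing the user ID. */
final class PercentageRollout {
    /** Maps a (flag, user) pair to a stable bucket in [0, 10000). */
    static int bucket(String flagKey, String userId) {
        CRC32 crc = new CRC32();
        crc.update((flagKey + ":" + userId).getBytes(StandardCharsets.UTF_8));
        return (int) (crc.getValue() % 10_000);
    }

    /** True when the user falls inside the rollout percentage (0.0 to 100.0). */
    static boolean isEnabled(String flagKey, String userId, double percent) {
        return bucket(flagKey, userId) < Math.round(percent * 100);
    }

    public static void main(String[] args) {
        int enabled = 0;
        for (int i = 0; i < 100_000; i++) {
            if (isEnabled("new-checkout", "user-" + i, 1.0)) {
                enabled++;
            }
        }
        System.out.println("Enabled for " + enabled + " of 100000 users at 1%");
    }
}
```

Because the bucket depends only on the flag key and user ID, raising the percentage from 1 to 100 only ever adds users; nobody who already has the feature loses it.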

Layer 7: Push Notifications & Engagement

The re-engagement channel that brings users back into your app with timely, personalized messages, from transactional alerts to marketing nudges.

The Winners: OneSignal, Firebase Cloud Messaging, Braze

This layer is commoditized. Pick based on your existing stack integration.

Layer 8: Testing

The quality gate that's supposed to catch bugs before your users do, validating that every screen, flow, and edge case works as expected across devices.

The Gap: This is where most stacks fall apart.

The Testing Gap: Why Teams Use Modern Tools Everywhere Except Here

Here's the uncomfortable stat: 22% of teams still use Appium as their primary mobile testing framework. Selenium leads web testing at 64.2%. Appium dates back to 2011-2012, and Selenium is older still (2004).

Meanwhile:

- Flutter was released in 2017

- React Native's New Architecture shipped in 2024

- GitHub Actions didn't exist until 2019

- Supabase was founded in 2020

Your testing layer is older than every other layer in your stack, in some cases by close to a decade.

What "Modern Everywhere Except Testing" Actually Looks Like

Here's the typical pattern: a team deploys Flutter apps through GitHub Actions to Firebase App Distribution. Analytics run on Mixpanel. The backend is Supabase. Everything modern, everything maintained.

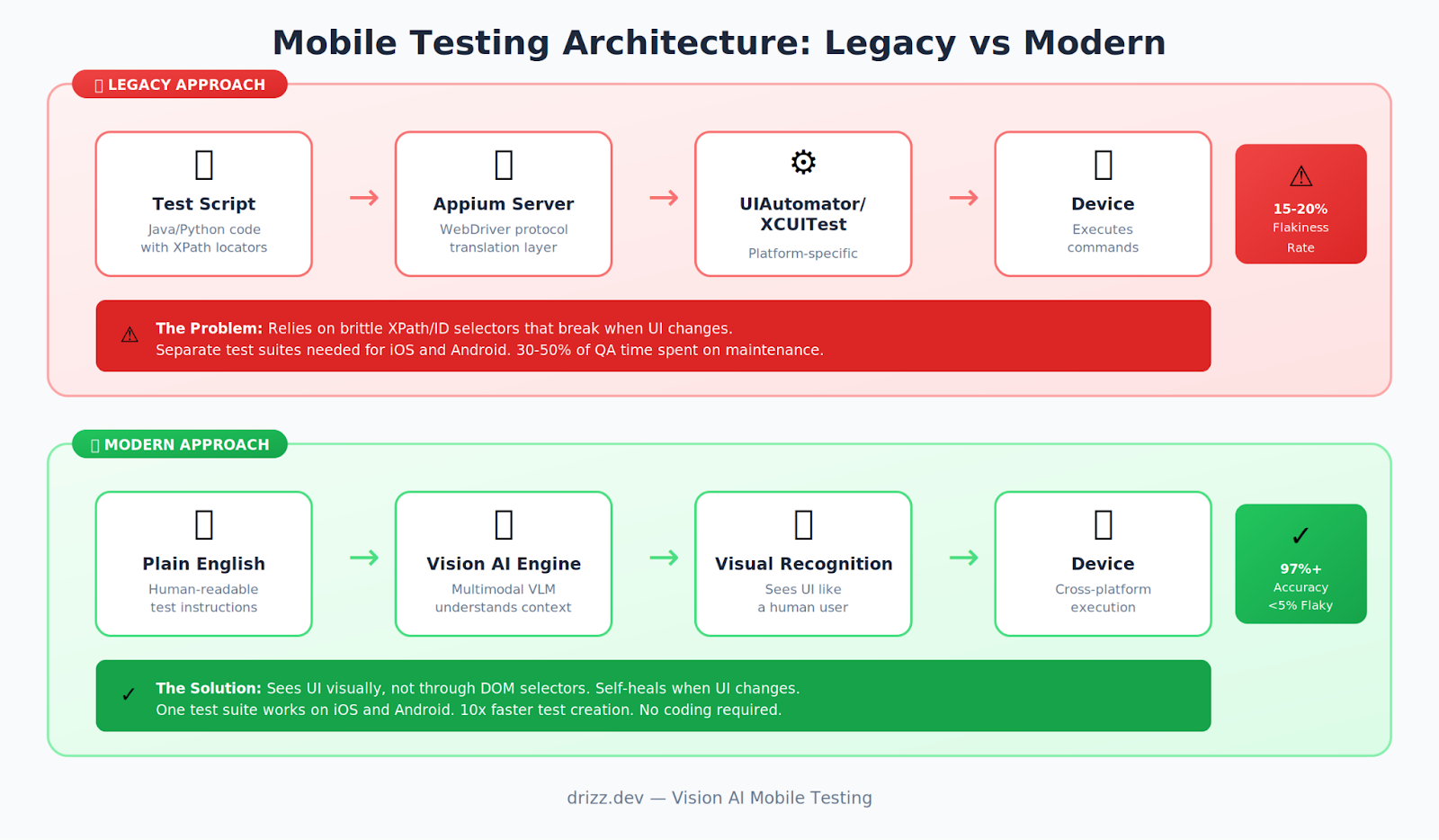

Then QA kicks in. Appium tests break every time the design team changes a button's padding. 30% of the sprint gets eaten by test maintenance: not catching actual bugs, but fixing selectors. Flakiness rates sit at 15-20%, sometimes spiking to 25% on real devices. The tests find more false positives than real issues.

Teams running Espresso for Android and XCUITest for iOS face a different flavor of the same problem: two completely separate test suites for the same user flows. Write it twice, maintain it twice, debug it twice. One mobile lead estimated $200K/year in engineering time just on test maintenance, mostly fixing selectors that broke because someone changed an accessibility label.

And then there are the teams that just gave up. The testing experience is so painful that engineers stop writing tests altogether, falling back to manual QA for critical flows. It's a bottleneck, but at least manual testing doesn't serve up false failures every morning.
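
To see why "false failures every morning" follows from a 15-20% flakiness rate, it helps to look at how flakiness compounds across a suite. This is a rough sketch under two assumptions that are not from the interviews: the rate is read as a per-test, per-run probability, and tests flake independently. The `FlakeCompounding` class and the suite size of 20 are illustrative.

```java
/** Hypothetical helper: how per-test flakiness compounds across a suite. */
final class FlakeCompounding {
    /**
     * Probability that at least one test fails spuriously in a single suite run,
     * assuming each of the given tests flakes independently with probability flakeRate.
     */
    static double falseAlarmProbability(double flakeRate, int tests) {
        return 1.0 - Math.pow(1.0 - flakeRate, tests);
    }

    public static void main(String[] args) {
        // 0.15 is the low end of the range quoted above; 20 tests is an assumed suite size.
        double p = falseAlarmProbability(0.15, 20);
        System.out.printf("Chance of at least one false failure per run: %.1f%%%n", p * 100);
    }
}
```

Under those assumptions, a 20-test suite shows at least one false failure in roughly 96% of runs, which is why a red build stops carrying information.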

The Numbers Behind the Gap

Source: State of Test Automation Survey 2024, TestGuild research, developer interviews

Modern vs. Legacy Testing: A Side-by-Side Comparison

Let's make this concrete. Here's what testing the same flow looks like across approaches:

The Flow: "User searches for a restaurant, adds item to cart, completes checkout"

Legacy Stack (Appium + Java)

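    import java.time.Duration;
    import org.openqa.selenium.By;
    import org.openqa.selenium.WebElement;
    import org.openqa.selenium.support.ui.ExpectedConditions;
    import org.openqa.selenium.support.ui.WebDriverWait;

    // Brittle selectors and verbose waits, and this is just the first 3 steps.
    // Assumes `driver` is an already-initialized AppiumDriver field on the test class.
    public void testCheckoutFlow() {
        WebDriverWait wait = new WebDriverWait(driver, Duration.ofSeconds(30));

        // Step 1: find the search box by resource ID and type the query
        WebElement searchBox = wait.until(
            ExpectedConditions.presenceOfElementLocated(
                By.xpath("//android.widget.EditText[@resource-id='com.app:id/search_input']")));
        searchBox.sendKeys("Pizza Palace");

        // Step 2: tap the matching search result
        wait.until(ExpectedConditions.presenceOfElementLocated(
            By.xpath("//android.widget.TextView[@text='Pizza Palace']"))).click();

        // Step 3: tap the add-to-cart button
        wait.until(ExpectedConditions.presenceOfElementLocated(
            By.xpath("//android.widget.Button[@resource-id='com.app:id/add_to_cart_btn']"))).click();

        // ... 30+ more lines for cart, address, payment, confirmation
    }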

Now here's the same flow with Vision AI testing:

    # 8 lines, human-readable, self-healing
    Test: Complete checkout flow
    Steps:
      - Search for "Pizza Palace"
      - Tap on the restaurant named "Pizza Palace"
      - Add the first menu item to cart
      - Go to cart and proceed to checkout
      - Complete the order with saved payment
      - Verify order confirmation appears

The difference shows up the moment something changes. Designer renames search_input to search_field? Appium breaks; Vision AI still finds the search bar visually. A promotional banner shifts the layout? Appium throws a timing error; Vision AI adapts and moves on. Need to run the same test on iOS? With Appium, you rewrite the locators for a different element tree. With Vision AI, the same plain-English test works on both platforms.

The Real Metrics Comparison

Why Vision AI + Plain English + Self-Healing Completes the 2026 Stack

Here's the architectural shift that matters: Vision AI testing doesn't rely on the DOM.

Traditional testing (Appium, Espresso, XCUITest) treats your app like a document structure. It finds elements by ID, class, or XPath: things that live in code.

Vision AI testing treats your app like a human sees it. It finds elements by how they look and what they're labeled. When your designer moves a button 10 pixels left, Vision AI doesn't care. When your Android and iOS apps have different underlying structures, Vision AI sees them as the same UI.
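
The contrast between the two lookup styles can be sketched in a few lines. This is a toy model, not how any particular Vision AI product works: `ElementLookup`, `ScreenElement`, and the "Search restaurants" label are all hypothetical, and in a real tool the visible label would come from OCR or a vision model rather than a hand-built list.

```java
import java.util.List;
import java.util.Locale;
import java.util.Optional;

/** Toy comparison of selector-based and label-based element lookup (hypothetical). */
final class ElementLookup {
    /** Minimal stand-in for one on-screen element. */
    static final class ScreenElement {
        final String resourceId;
        final String visibleLabel;

        ScreenElement(String resourceId, String visibleLabel) {
            this.resourceId = resourceId;
            this.visibleLabel = visibleLabel;
        }
    }

    /** Selector-style lookup: matches only the exact resource ID. */
    static Optional<ScreenElement> byResourceId(List<ScreenElement> screen, String id) {
        return screen.stream().filter(e -> e.resourceId.equals(id)).findFirst();
    }

    /** Label-style lookup: matches what the user sees, ignoring case and outer whitespace. */
    static Optional<ScreenElement> byVisibleLabel(List<ScreenElement> screen, String label) {
        String wanted = normalize(label);
        return screen.stream().filter(e -> normalize(e.visibleLabel).equals(wanted)).findFirst();
    }

    private static String normalize(String s) {
        return s.trim().toLowerCase(Locale.ROOT);
    }

    public static void main(String[] args) {
        // The same screen after a developer renames search_input to search_field.
        List<ScreenElement> screen = List.of(
            new ScreenElement("com.app:id/search_field", "Search restaurants"));

        System.out.println("old id found:  " + byResourceId(screen, "com.app:id/search_input").isPresent());
        System.out.println("label found:   " + byVisibleLabel(screen, "search restaurants").isPresent());
    }
}
```

The ID lookup fails after the rename because it depends on a name the user never sees; the label lookup keeps working because nothing visible changed.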

The Technical Architecture

Why This Matters for Your Stack

It's the missing piece.

Your Flutter/React Native app is cross-platform. Your Supabase backend is cross-platform. Your GitHub Actions CI/CD works for everything.

But Appium? Espresso? XCUITest? They're platform-specific, maintenance-heavy, and fundamentally incompatible with how fast modern mobile teams ship.

Vision AI testing finally gives you a testing layer that matches the rest of your 2026 stack:

- Cross-platform: One test suite for iOS and Android

- Low-code: Plain English means PMs and designers can contribute

- Self-healing: UI changes don't break tests

- CI/CD native: Integrates with GitHub Actions, Bitrise, etc.

- Framework agnostic: Works with Flutter, React Native, native, anything

Drizz: The Modern Testing Layer in Practice

Drizz was built by engineers from Amazon, Coinbase, and Gojek who lived through exactly the problems described above. The company raised $2.7M in seed funding (Stellaris Venture Partners, Shastra VC) specifically to solve the testing layer gap.

How It Works

Write tests in plain English:

Tap on "Sign In"

Enter "test@example.com" in the email field

Enter "password123" in the password field

Tap "Continue"

Verify "Welcome back" message appears

Vision AI executes the test:

- Captures screenshot

- Identifies UI elements visually (not by selectors)

- Performs actions as a human would

- Adapts to different screen sizes, densities, devices

Self-healing handles changes:

- Button moved? AI finds it by visual appearance

- Text changed slightly? AI uses semantic understanding

- Layout different on tablet? AI adapts

Debugging when tests fail:

- Step-by-step screenshots

- Detailed log intelligence

- Pinpoints actual bugs vs. test issues
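
To make the "plain English in, actions out" idea concrete, here is a toy parser for the five sign-in steps above. It is only an illustration: `StepParser` is hypothetical, it uses fixed regular expressions rather than any language model, and it says nothing about how Drizz interprets steps internally.

```java
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Toy parser that turns plain-English steps into structured actions (hypothetical). */
final class StepParser {
    enum Action { TAP, ENTER, VERIFY }

    static final class Step {
        final Action action;
        final String target; // label to tap, field to fill, or text to look for
        final String value;  // text to type; null unless the action is ENTER

        Step(Action action, String target, String value) {
            this.action = action;
            this.target = target;
            this.value = value;
        }

        @Override
        public String toString() {
            return value == null
                ? action + "(" + target + ")"
                : action + "(" + target + " <- " + value + ")";
        }
    }

    private static final Pattern TAP = Pattern.compile("Tap(?: on)? \"([^\"]+)\"");
    private static final Pattern ENTER = Pattern.compile("Enter \"([^\"]*)\" in the (.+) field");
    private static final Pattern VERIFY = Pattern.compile("Verify \"([^\"]+)\" (?:message )?appears");

    /** Parses one step, or throws if the wording is not one of the three known shapes. */
    static Step parse(String line) {
        String s = line.trim();
        Matcher m;
        if ((m = TAP.matcher(s)).matches()) return new Step(Action.TAP, m.group(1), null);
        if ((m = ENTER.matcher(s)).matches()) return new Step(Action.ENTER, m.group(2), m.group(1));
        if ((m = VERIFY.matcher(s)).matches()) return new Step(Action.VERIFY, m.group(1), null);
        throw new IllegalArgumentException("Unrecognized step: " + line);
    }

    public static void main(String[] args) {
        List<String> script = List.of(
            "Tap on \"Sign In\"",
            "Enter \"test@example.com\" in the email field",
            "Enter \"password123\" in the password field",
            "Tap \"Continue\"",
            "Verify \"Welcome back\" message appears");
        for (String line : script) {
            System.out.println(parse(line));
        }
    }
}
```

A fixed grammar like this rejects any phrasing it has not seen, which is the limitation that model-based interpretation is meant to remove.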

The Results

From early deployments with global unicorns:

- 97%+ test accuracy (vs. 80-85% with typical Appium setups)

- 10x faster test creation (minutes vs. hours)

- <5% flakiness (vs. 15-20% industry average)

- 15 hours/week average user engagement (teams actually use it)

The ROI Math: What This Actually Saves

Let's do the calculation for a typical mobile team (3 engineers, 1 QA, shipping bi-weekly):

Current State (Appium/Traditional)

With Modern Testing (Vision AI)

Plus the intangibles:

- Faster release cycles (testing isn't the bottleneck)

- Higher confidence in releases (fewer production bugs)

- Better developer experience (less frustration)

- Non-engineers can contribute tests (expand coverage)
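
The shape of the calculation for the team above (3 engineers, 1 QA) can be sketched as follows. The 30% maintenance share is the figure quoted earlier in this article; the $150,000 loaded cost per person is a placeholder assumption, and `MaintenanceCost` is a hypothetical helper. Swap in your own numbers.

```java
/** Hypothetical back-of-envelope calculator for test-maintenance cost. */
final class MaintenanceCost {
    /**
     * Annual cost of test maintenance in whole dollars.
     *
     * @param people            team members whose time is affected
     * @param loadedCostPerYear fully loaded annual cost per person, in dollars (assumed input)
     * @param maintenancePct    share of working time spent on test maintenance, 0 to 100
     */
    static long annualCost(int people, long loadedCostPerYear, int maintenancePct) {
        return people * loadedCostPerYear * maintenancePct / 100;
    }

    public static void main(String[] args) {
        // 4 people = 3 engineers + 1 QA; 30 = the sprint share quoted earlier.
        // 150_000 is a placeholder, not a figure from the interviews.
        System.out.println(annualCost(4, 150_000L, 30)); // prints 180000
    }
}
```

Running the same function with the maintenance share you measure during a pilot gives the comparison figure for your own team.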

Getting Started: The Quick Stack Upgrade

If you're convinced your testing layer needs modernization, you don't need a quarter-long migration plan. Here's how to start:

1. Audit (1 hour): Check your flakiness rate (failures ÷ total runs) and count how many tests broke last month from UI changes, not actual bugs. If either number makes you wince, keep going.

2. Pilot (1 afternoon): Pick your top 3-5 critical user flows (login, checkout, onboarding). Recreate them in a Vision AI tool like Drizz using plain English. Run them alongside your existing suite.

3. Compare & Decide: Look at execution time, flakiness, and how long each suite took to set up. The numbers usually make the decision obvious.
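
The audit in step 1 needs little more than a list of pass/fail results exported from CI. Here is a minimal sketch using the failures ÷ total runs definition given there; the `FlakinessAudit` class, the `Run` shape, and the sample data are all hypothetical. Note that this counts every failure, so results from runs against genuinely broken builds should be filtered out first if you want flakiness rather than raw failure rate.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical audit helper: failure rate overall and per test. */
final class FlakinessAudit {
    /** One CI execution of one test; adapt to whatever your CI report contains. */
    static final class Run {
        final String test;
        final boolean passed;

        Run(String test, boolean passed) {
            this.test = test;
            this.passed = passed;
        }
    }

    /** failures ÷ total runs; returns 0 when there are no runs. */
    static double rate(long failures, long totalRuns) {
        return totalRuns == 0 ? 0.0 : (double) failures / totalRuns;
    }

    /** Failure rate for each test, in first-seen order. */
    static Map<String, Double> ratePerTest(List<Run> runs) {
        Map<String, long[]> counts = new LinkedHashMap<>(); // value = {failures, total}
        for (Run r : runs) {
            long[] c = counts.computeIfAbsent(r.test, k -> new long[2]);
            if (!r.passed) {
                c[0]++;
            }
            c[1]++;
        }
        Map<String, Double> rates = new LinkedHashMap<>();
        counts.forEach((test, c) -> rates.put(test, rate(c[0], c[1])));
        return rates;
    }

    public static void main(String[] args) {
        // Made-up sample data for illustration only.
        List<Run> runs = List.of(
            new Run("login", true), new Run("login", false),
            new Run("checkout", true), new Run("checkout", true));
        ratePerTest(runs).forEach((test, r) -> System.out.println(test + ": " + r));
    }
}
```

Sorting the per-test rates from highest to lowest is a simple way to pick the 3-5 flows for the pilot in step 2.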

The Bottom Line

Here's the uncomfortable truth: You've already modernized 7 of 8 layers in your mobile stack.

Flutter or React Native for cross-platform UI. Firebase or Supabase for backend. GitHub Actions or Bitrise for CI/CD. Mixpanel for analytics. Sentry for monitoring.

Modern, maintained, and actually pleasant to use.

Then there's testing. Still relying on tools built in 2011-2012. Still maintaining separate test suites per platform. Still losing engineering hours to flaky selectors and brittle XPaths.

The testing layer is the last piece of the puzzle.

The good news? The category is finally catching up. Vision AI-based testing tools (Drizz, Applitools, and others) are bringing the same cross-platform, low-maintenance philosophy to testing that Flutter brought to frontend development. The approach is different (visual understanding instead of DOM selectors), but the outcome is the same: less busywork, more confidence, faster shipping.

A modern 2026 mobile stack looks something like this:

The testing gap is real, but it's solvable. Whether you start with a pilot on your top 5 user flows or do a full audit of your current flakiness rate, the important thing is to stop accepting a 2012-era testing experience as normal.

Your stack deserves better. So does your team.

Want to see what Vision AI testing looks like in practice? Drizz is a good place to start: it's built specifically for the mobile testing gap we've been talking about.