XCUITest vs Appium: Choosing Between Two iOS Frameworks, and When Neither Solves the Real Problem

Most teams searching "XCUITest vs Appium" are trying to make one of three decisions: drop Appium for native iOS testing, figure out whether to split a cross-platform test suite, or find a way out of a flaky test situation that's slowing the whole engineering org down. This guide answers all three honestly, and introduces a fourth option that's worth knowing about if your problem is the third one.

What Each Framework Actually Is

Appium

Appium is an open-source, cross-platform mobile test automation framework built on the WebDriver protocol. It supports native, hybrid, and mobile web apps across iOS, Android, and Windows, and lets teams write tests in any WebDriver-compatible language: Java, Python, JavaScript, Ruby, C#, and more.

Architecturally, Appium runs as a Node.js server. Your test script sends commands to the server via a REST API, which translates them into platform-specific actions. On iOS, that translation layer uses Apple's XCUITest framework under the hood, specifically via a tool called WebDriverAgent (WDA), which acts as a bridge between the Appium server and the device. This is confirmed in Appium's own documentation: "This driver leverages Apple's XCUITest libraries under the hood in order to facilitate automation of your app."
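To make the "commands over a REST API" step concrete, here is a minimal sketch of the HTTP requests a client library builds for a W3C WebDriver session. It uses only the Python standard library and constructs the requests without sending them. The server address, the bundle ID, and the helper names (`new_session_request`, `find_element_request`, `_post`) are assumptions made for illustration. In practice an Appium client library produces these requests for you.

```python
import json
from urllib.request import Request

# Assumption: a local Appium server listening on its default port.
APPIUM_URL = "http://127.0.0.1:4723"


def _post(url: str, payload: dict) -> Request:
    """Build a JSON POST request without sending it."""
    body = json.dumps(payload).encode("utf-8")
    headers = {"Content-Type": "application/json; charset=utf-8"}
    return Request(url, data=body, headers=headers, method="POST")


def new_session_request(bundle_id: str) -> Request:
    """Build the WebDriver 'New Session' request for an iOS app."""
    payload = {
        "capabilities": {
            "alwaysMatch": {
                "platformName": "iOS",
                # Selects Appium's XCUITest driver, which talks to WebDriverAgent.
                "appium:automationName": "XCUITest",
                "appium:bundleId": bundle_id,
            }
        }
    }
    return _post(f"{APPIUM_URL}/session", payload)


def find_element_request(session_id: str, accessibility_id: str) -> Request:
    """Build the WebDriver 'Find Element' request for an accessibility ID."""
    payload = {"using": "accessibility id", "value": accessibility_id}
    return _post(f"{APPIUM_URL}/session/{session_id}/element", payload)


# Hypothetical session ID and identifier, for illustration only.
request = find_element_request("abc123", "login_button")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Every tap, swipe, or lookup in an Appium test becomes a request of this kind, which the server then forwards to WebDriverAgent on the device.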

This architecture is Appium's greatest strength and its most significant tradeoff. The cross-platform abstraction means one test codebase can run on both iOS and Android, but every command makes a round trip through the server, which adds latency and introduces potential failure points.

Appium's genuine strengths:

- Single test suite for iOS and Android

- Language flexibility: write tests in whatever language your team knows

- Large, mature ecosystem with extensive community documentation

- Works with Selenium-experienced QA engineers without a steep learning curve

- Integrates with all major device clouds (BrowserStack, Sauce Labs, etc.)

- Supports native, hybrid, and mobile web apps

Appium's known tradeoffs:

- Slower test execution due to the client-server architecture

- More complex setup: the Appium server, WebDriverAgent, and Xcode dependencies all need to be configured correctly

- Higher flakiness than native frameworks, partly because of timing issues in the translation layer and partly because of selector instability

- Xcode version updates can temporarily break iOS automation until the community updates WDA
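Timing-related flakiness of the kind listed above is usually handled with explicit waits rather than fixed sleeps. The sketch below shows that polling pattern using only the standard library. `wait_until` is a hypothetical helper written for this example, similar in spirit to the explicit-wait utilities that WebDriver client libraries ship, and not part of Appium itself.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def wait_until(
    condition: Callable[[], Optional[T]],
    timeout_s: float = 10.0,
    poll_s: float = 0.5,
) -> T:
    """Poll `condition` until it returns a truthy value or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout_s} s")
        time.sleep(poll_s)


# Stand-in for "look up the element": it succeeds on the third attempt.
attempts = {"count": 0}


def login_button_visible() -> Optional[str]:
    attempts["count"] += 1
    return "login_button" if attempts["count"] >= 3 else None


element = wait_until(login_button_visible, timeout_s=2.0, poll_s=0.01)
print(element, attempts["count"])
```

In a real suite the condition would be an element lookup through the driver. The point of the pattern is that the test proceeds as soon as the UI is ready and fails with a clear timeout when it never is.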

XCUITest

XCUITest is Apple's native UI testing framework, introduced with Xcode 7 and iOS 9 and built into Xcode ever since. Tests are written in Swift or Objective-C and run from Xcode or from the command line with xcodebuild. Unlike Appium, XCUITest has no server layer: commands interact with the app directly through Apple's own APIs.

This is why XCUITest is generally faster and more stable than Appium for iOS testing. There is no translation layer, no server initialization, and no network overhead. The test code runs in a separate runner process rather than inside the app, but XCUITest synchronizes with the app automatically: before each interaction it waits for the app to become idle, so actions proceed only once the UI is ready. Practitioner write-ups commonly claim that XCUITest can execute tests up to 50% faster than Appium-based approaches on iOS, though the actual gap depends on the suite.

XCUITest's genuine strengths:

- Faster test execution: no server layer means lower latency

- More reliable and less flaky than Appium on iOS

- Simple setup: it's built into Xcode, with no additional dependencies

- Deep integration with the Apple ecosystem: supports HealthKit, widgets, Live Activities, and other iOS-specific APIs

- Easier for iOS developers to adopt: same languages (Swift/ObjC), same IDE

- Maintained by Apple and updated alongside iOS releases

XCUITest's known tradeoffs:

- iOS only, with no Android support

- Requires macOS and Xcode to run

- Tests can be written in Swift or Objective-C only, with no language flexibility

- Teams building cross-platform apps need a separate test suite for Android

- Not well-suited for React Native or Flutter apps, where the native view hierarchy doesn't reflect the component structure

The Core Architectural Difference

The fundamental difference comes down to where each framework sits relative to the app:

XCUITest runs as a separate process but uses Apple's native APIs directly. It accesses the app through the accessibility layer, the same layer that powers screen readers. This gives it accurate element resolution and built-in synchronization.

Appium on iOS wraps XCUITest through WebDriverAgent. Your test script → Appium server → WebDriverAgent → XCUITest APIs → app. Each layer adds overhead and an additional failure surface.
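The cost of the extra layers can be reasoned about with a simple per-command model. The sketch below is illustrative only: the hop names mirror the chain described above, but every number is a hypothetical placeholder, not a measurement, and `Hop` and `suite_time_ms` are names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    name: str
    overhead_ms: int  # hypothetical per-command cost added by this layer


def suite_time_ms(commands: int, action_ms: int, hops: list[Hop]) -> int:
    """Total time if every command pays the action cost plus each hop's overhead."""
    per_command = action_ms + sum(hop.overhead_ms for hop in hops)
    return commands * per_command


# Placeholder figures; measure your own suite before drawing conclusions.
appium_hops = [
    Hop("test script -> Appium server (HTTP)", 20),
    Hop("Appium server -> WebDriverAgent (HTTP)", 20),
]
native_hops: list[Hop] = []  # XCUITest calls Apple's APIs without these hops

appium_total = suite_time_ms(commands=1_000, action_ms=200, hops=appium_hops)
native_total = suite_time_ms(commands=1_000, action_ms=200, hops=native_hops)

# Extra milliseconds attributable to the additional layers in this model.
print(appium_total - native_total)
```

The model ignores server startup and WebDriverAgent build time, which add a fixed cost per run rather than per command. Its only purpose is to show that per-command overhead scales with the number of UI interactions in the suite.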

One implication worth noting: since Appium uses XCUITest under the hood for iOS, some of XCUITest's underlying limitations, particularly around accessibility layer representation of cross-platform frameworks, flow through to Appium as well. You're not bypassing XCUITest's constraints when you use Appium on iOS; you're adding a layer on top of them.

Head-to-Head Comparison

The Decision Framework

Rather than "which is better," the right question is which constraint matters most to your team.

Choose XCUITest if:

- Your app is iOS-only and you're not planning Android

- Your team writes Swift or Objective-C and wants to stay in Xcode

- Test speed and stability are priorities and you're willing to accept iOS-only coverage

- You rely on deep Apple ecosystem APIs (HealthKit, WatchKit, Live Activities)

- You want the simplest possible setup with minimal external dependencies

Choose Appium if:

- You ship on both iOS and Android and want a single test codebase

- Your QA team comes from a web testing background and already knows WebDriver

- You need language flexibility across a polyglot engineering organization

- You're testing hybrid apps or mobile web alongside native
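The two checklists above can be read as a simple tally. Here is a rough sketch of that reading, assuming every criterion carries equal weight, which real teams will rarely find to be true. `TeamProfile` and `recommend` are hypothetical names, and the output is a starting point for discussion, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    ships_android: bool
    writes_swift_or_objc: bool
    needs_apple_ecosystem_apis: bool
    qa_knows_webdriver: bool
    tests_hybrid_or_mobile_web: bool


def recommend(profile: TeamProfile) -> str:
    """Count which checklist the team matches more often."""
    xcuitest_votes = sum([
        not profile.ships_android,
        profile.writes_swift_or_objc,
        profile.needs_apple_ecosystem_apis,
    ])
    appium_votes = sum([
        profile.ships_android,
        profile.qa_knows_webdriver,
        profile.tests_hybrid_or_mobile_web,
    ])
    if xcuitest_votes > appium_votes:
        return "XCUITest"
    if appium_votes > xcuitest_votes:
        return "Appium"
    return "No clear winner: weigh speed and stability against coverage"


ios_only_team = TeamProfile(
    ships_android=False,
    writes_swift_or_objc=True,
    needs_apple_ecosystem_apis=True,
    qa_knows_webdriver=False,
    tests_hybrid_or_mobile_web=False,
)
print(recommend(ios_only_team))
```

A tie is a useful signal in itself. It usually means the team has to decide which constraint it is least willing to give up.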

The React Native and Flutter caveat: React Native and Flutter apps are commonly cited as Appium use cases. In practice, neither Appium nor XCUITest works cleanly with them, because the hierarchy the test tool sees does not map neatly onto the components developers write. React Native renders native views, but the accessibility tree it produces is inconsistent across platforms and versions. Flutter draws its own widgets and exposes them to the accessibility layer only through a separate semantics tree. When you automate a React Native or Flutter app with XCUITest, you're interacting with a native accessibility representation that may not accurately reflect what the user sees. When you use Appium, you're doing the same thing with an additional translation layer on top. Neither test framework was designed for this, and the maintenance overhead reflects that.

The Shared Limitation: Selectors

Here's the thing that most XCUITest vs Appium comparisons don't say plainly: both frameworks are selector-based. They find UI elements through identifiers defined in code. XCUITest uses accessibility identifiers and element type predicates; Appium uses XPath, accessibility IDs, and class chains. Both approaches bind your tests to the internal structure of your UI.

This works well when the UI is stable and the element hierarchy is predictable. It becomes a maintenance problem when:

- UI components are redesigned, so selectors break even when functionality hasn't changed

- Server-driven or dynamic content changes element structure between sessions

- A/B tests alter the UI conditionally, making element IDs inconsistent

- React Native or Flutter framework updates change how components are represented in the accessibility layer

- A team ships frequently, so any release can invalidate dozens of selector dependencies

Teams commonly report spending 30–50% of their QA engineering time maintaining broken selectors rather than writing new test coverage. This isn't a flaw in Appium or XCUITest specifically; it's an architectural property of selector-based testing. The selector is a contract between your test and your UI. Every UI change is a potential contract breach.
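The "contract" idea can be shown with a toy model. The sketch below represents a screen as a nested dictionary and compares two lookup styles: a positional path, comparable to an index-based XPath, and a search by accessibility identifier. The tree shape and the helper names (`node`, `find_by_path`, `find_by_id`) are simplifications assumed for illustration, not how either framework stores its element hierarchy.

```python
from typing import Optional


def node(type_: str, id_: Optional[str] = None,
         children: Optional[list] = None) -> dict:
    """Create a simplified UI element."""
    return {"type": type_, "id": id_, "children": children or []}


def find_by_path(root: dict, path: list[int]) -> Optional[dict]:
    """Follow child indexes from the root, like a positional XPath."""
    current = root
    for index in path:
        if index >= len(current["children"]):
            return None
        current = current["children"][index]
    return current


def find_by_id(root: dict, identifier: str) -> Optional[dict]:
    """Depth-first search for an accessibility identifier, wherever it sits."""
    if root["id"] == identifier:
        return root
    for child in root["children"]:
        found = find_by_id(child, identifier)
        if found is not None:
            return found
    return None


# Version 1: the login button is the second child of the window.
v1 = node("Window", children=[
    node("TextField", "email"),
    node("Button", "login_button"),
])

# Version 2: a redesign wraps the same controls in a new container view.
v2 = node("Window", children=[node("Other", children=v1["children"])])

print(find_by_path(v1, [1]) is not None)            # True
print(find_by_path(v2, [1]) is not None)            # False: the path broke
print(find_by_id(v2, "login_button") is not None)   # True: the ID survived
```

Identifier-based lookups survive the re-nesting, which is why they are generally preferred over positional paths. They still fail the moment someone renames or removes `login_button`, so the contract is looser but not gone.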

When Both Frameworks Hit Their Ceiling

If selector maintenance has become the dominant overhead in your mobile QA process, to the point where fixing broken tests takes more time than writing new ones, switching between Appium and XCUITest won't resolve it. The problem is the selector-based approach itself, not which framework is implementing it.

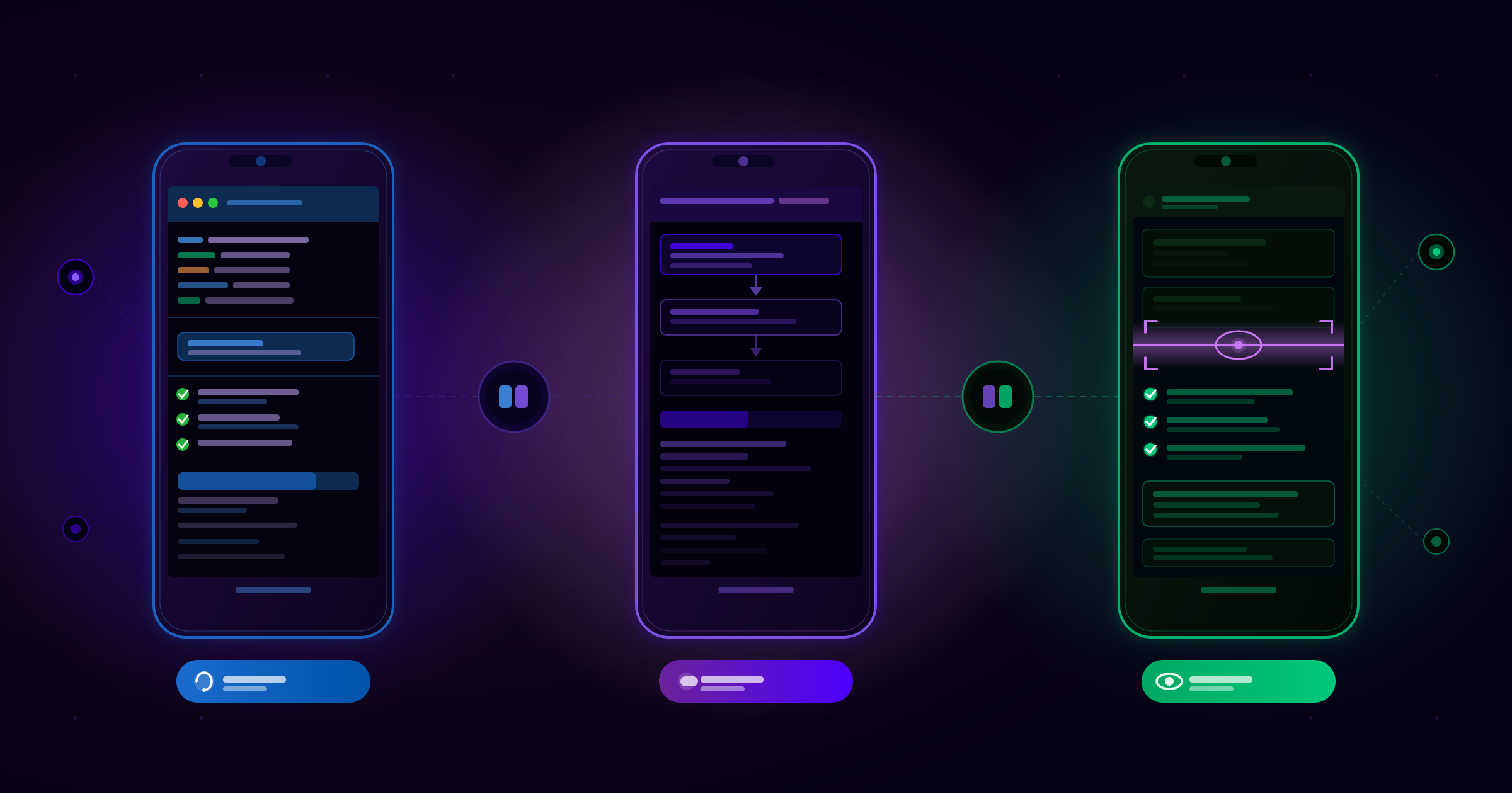

This is where a newer category of tooling has emerged: Vision AI-based mobile test automation, which replaces selectors with computer vision.

Instead of binding tests to element identifiers, Vision AI reads the screen visually, the way a human tester would, and executes test steps based on what it sees. When the UI changes, the AI adapts rather than failing. Tests are written in plain English rather than selector syntax.

Drizz is built on this model. It's a Vision AI mobile test automation platform that runs tests on real iOS and Android devices without selectors, accessibility IDs, or XPath. Founded in 2024 by engineers from Amazon, Coinbase, and Gojek, it was built specifically around the problem that selector-based automation creates at scale.

What makes it a meaningful alternative for teams at the XCUITest/Appium ceiling:

- Single test suite for both iOS and Android: Tests describe what the user does, not what element to find, so the same steps run on both platforms

- Self-healing execution: When elements move or are renamed, Drizz identifies intent visually and adapts

- Plain English test authoring: Non-engineers can write and review tests without selector syntax

- Works with React Native and Flutter: Vision AI reads the screen regardless of what renders it, so framework-specific accessibility layer inconsistencies don't matter

- CI/CD integration via API: Plugs into GitHub Actions, Jenkins, GitLab, Azure DevOps

- Real device execution via Drizz Cloud across OS versions, screen sizes, and manufacturers

Drizz reports approximately 5% flakiness in production environments and 97%+ execution success in CI. The company cites these figures from early customer deployments and compares them against the 8–15% flakiness rates commonly reported with locator-based frameworks.

This isn't the right tool for every team. If your Appium or XCUITest suite is stable and well-maintained, there's no reason to switch. If you need deep Apple ecosystem API access that only XCUITest provides, Vision AI won't replace that. But for cross-platform mobile teams, particularly those on React Native or Flutter, where test maintenance has become the bottleneck rather than test writing, it addresses the root cause that framework comparisons don't.

Summary

XCUITest is the right choice when iOS-only coverage, execution speed, and tight Apple ecosystem integration are the priorities. Appium is the right choice when cross-platform coverage and a single test codebase matter more than raw performance. Both are mature, capable frameworks; the tradeoffs between them are architectural, not qualitative.

The comparison only breaks down when selector maintenance overhead becomes the dominant problem. At that point, the question shifts from "Appium or XCUITest" to "do we need a different approach to mobile automation altogether?"

For teams asking that second question, Drizz is worth evaluating. For everyone else, pick the framework that matches your app architecture and team constraints; the decision framework above gives you everything you need to decide.