"Test failed. Element not found."

Sound familiar?

Your CI pipeline just went red. Slack is blowing up. The QA channel is flooded with the same question: "Is this a real bug or did something change?"

Spoiler: nothing broke. The checkout button still works perfectly. Users are converting just fine.

But your XPath selector was looking for btn-checkout-v1, and someone renamed it to cta-purchase-experiment-b.

Welcome to the world of dynamic UI testing, where your app evolves faster than your test suite can keep up.

The old playbook says "write more robust selectors" or "implement self-healing." But here's the truth: you can't selector your way out of a fundamentally broken approach.

This is why Drizz's Vision AI exists. Not to write better selectors, but to eliminate the need for them entirely.

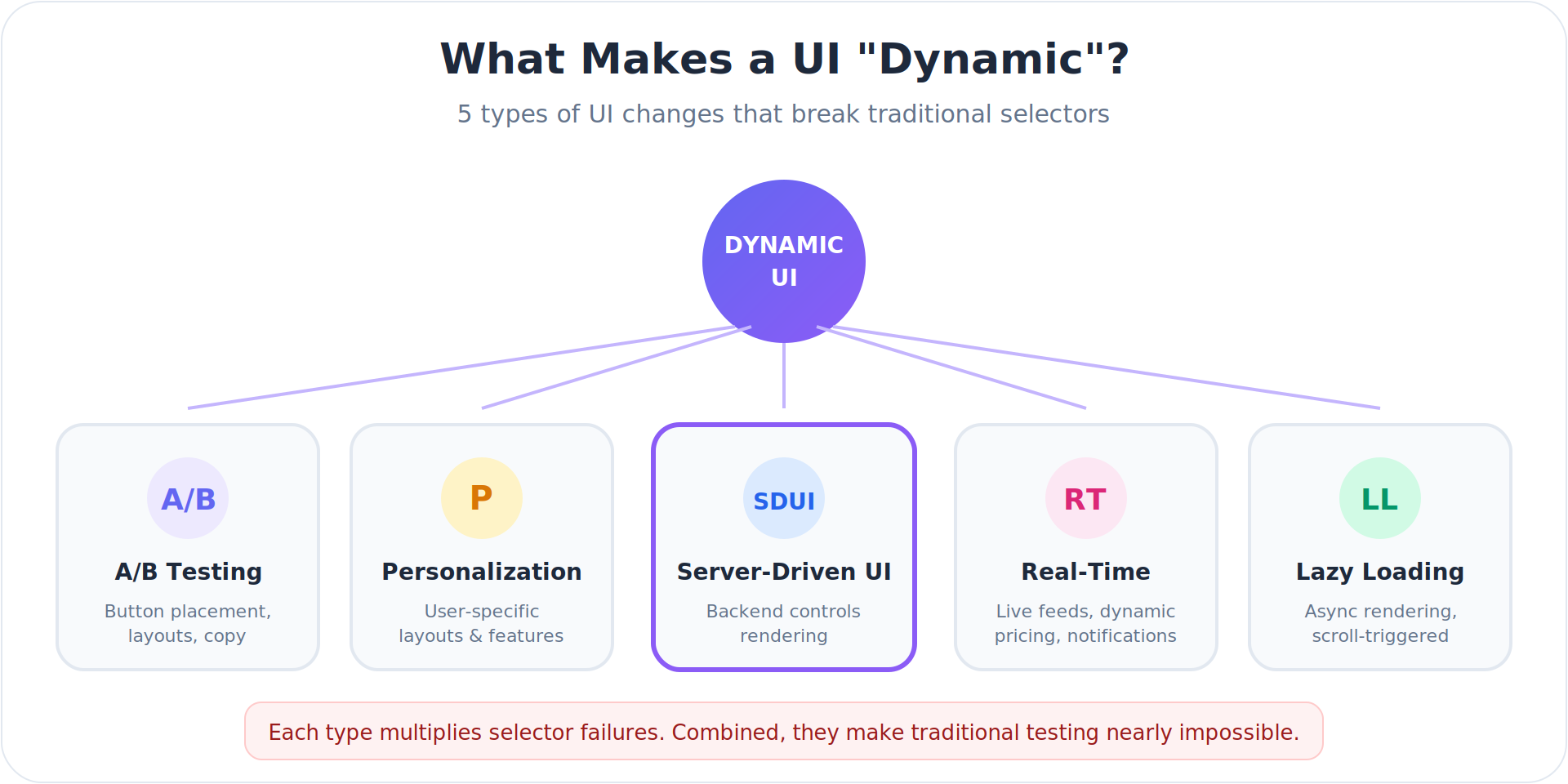

What Makes a UI "Dynamic"?

Before diving into solutions, let's define the problem. A dynamic UI is any interface where elements change without code deployment:

1. A/B Testing and Experimentation

Product teams constantly run experiments. Button placement, color schemes, copy variations, layout structures: all of these can change between user sessions or even within the same session. Facebook, Amazon, and Google run thousands of simultaneous experiments across their apps.

For testing, this means a selector that works for Control Group A fails completely for Variant B.

2. Personalization Engines

Modern apps tailor experiences based on user behavior, demographics, location, and preferences. An e-commerce app might show different homepage layouts for first-time visitors versus returning customers. A banking app displays different feature sets based on account type.

Each personalized variation potentially breaks selectors written for a "default" view that doesn't exist for most users.

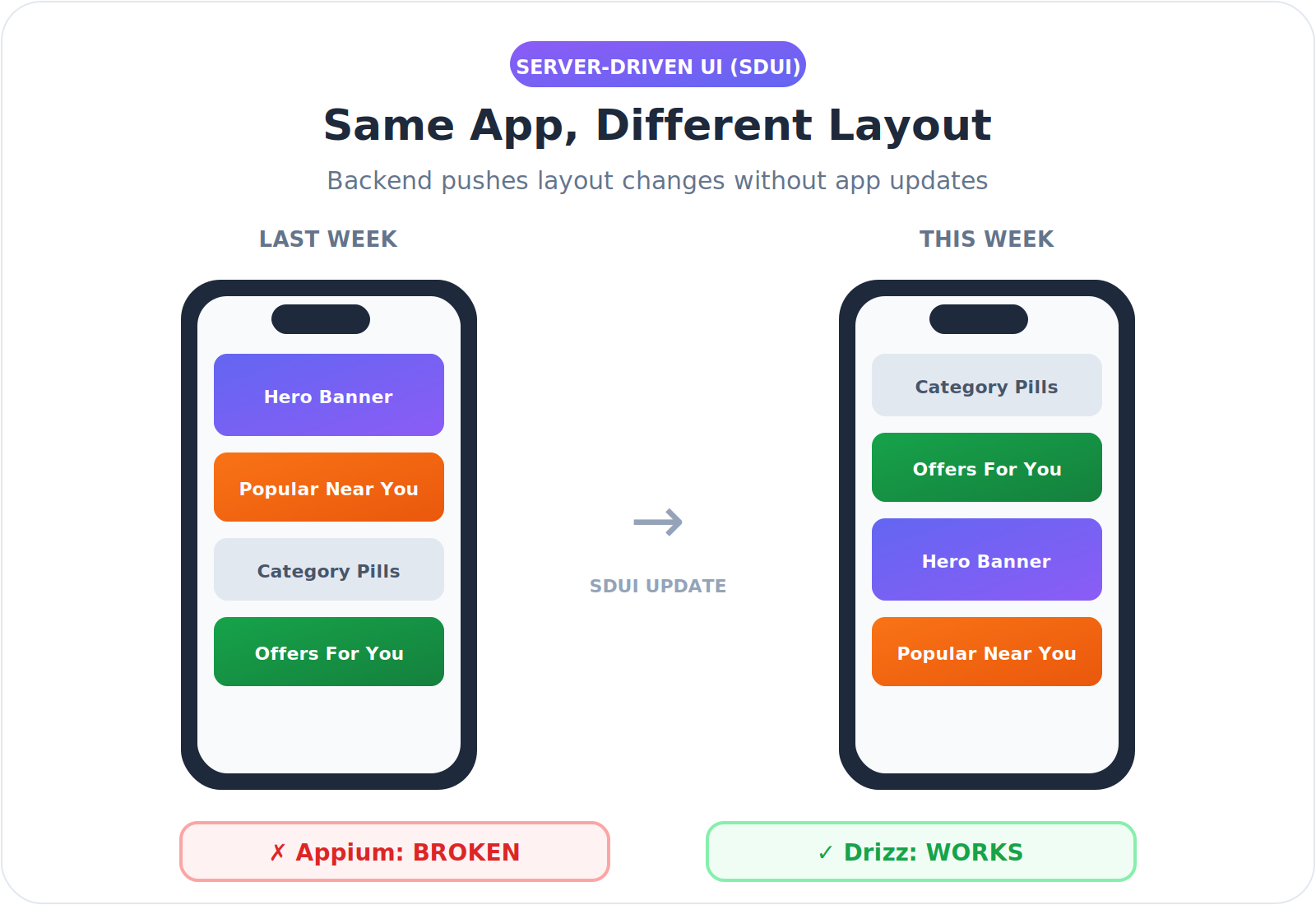

3. Server-Driven UI (SDUI)

This is the big one and it's growing fast.

SDUI flips the traditional mobile architecture on its head. Instead of hardcoding UI layouts in your app, the backend sends JSON configurations that tell the app what to render. The app becomes a rendering engine; the server controls the experience.

Why teams adopt SDUI:

- Skip the app store. Push UI changes instantly without waiting for Apple/Google review cycles

- Run experiments faster. A/B test layouts without deploying new app versions

- Personalize at scale. Serve different UIs to different user segments from one codebase

- Fix bugs immediately. Patch UI issues server-side, no user update required

Who's using it: Airbnb, Lyft, Swiggy, PhonePe, and most super-apps have adopted SDUI architectures. It's becoming standard for any app that needs to iterate quickly.

The testing nightmare:

With SDUI, your element IDs, class names, and component hierarchies aren't fixed; they're dynamic payloads from the server. A screen that rendered as ProductCard > Title > PriceLabel yesterday might be DynamicBlock > TextComponent > StyledPrice today.

    // Monday's server response
    {
      "component": "ProductCard",
      "children": [
        {"type": "Title", "id": "product-title-001"},
        {"type": "PriceLabel", "id": "price-label-001"}
      ]
    }

    // Tuesday's server response (after backend update)
    {
      "component": "DynamicBlock",
      "children": [
        {"type": "TextComponent", "id": "txt-7x9k2"},
        {"type": "StyledPrice", "id": "price-exp-b"}
      ]
    }

Same visual result. Completely different DOM structure. Every selector you wrote on Monday is broken by Tuesday.
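To make the breakage concrete, here is a minimal Python sketch that treats the two payloads above as plain dictionaries and checks how many of Monday's IDs still exist on Tuesday. The helper name `collect_ids` is an assumption made for illustration and is not part of any SDUI framework.

```python
# Illustrative only: these dictionaries mirror the Monday/Tuesday payloads above.
monday = {
    "component": "ProductCard",
    "children": [
        {"type": "Title", "id": "product-title-001"},
        {"type": "PriceLabel", "id": "price-label-001"},
    ],
}
tuesday = {
    "component": "DynamicBlock",
    "children": [
        {"type": "TextComponent", "id": "txt-7x9k2"},
        {"type": "StyledPrice", "id": "price-exp-b"},
    ],
}


def collect_ids(node):
    """Recursively gather every "id" value in a component tree."""
    ids = set()
    if "id" in node:
        ids.add(node["id"])
    for child in node.get("children", []):
        ids |= collect_ids(child)
    return ids


monday_ids = collect_ids(monday)
tuesday_ids = collect_ids(tuesday)
surviving = monday_ids & tuesday_ids
print(f"{len(surviving)} of {len(monday_ids)} Monday IDs still exist on Tuesday")
```

Any test that located elements by one of Monday's IDs has nothing left to match on Tuesday, even though the screen looks the same.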

This is why SDUI teams often give up on E2E automation entirely or accept 30%+ flakiness as "normal."

4. Real-Time Content Updates

Live data feeds, dynamic pricing, inventory status, social feeds, notification counts: these all create elements that appear, disappear, or change content unpredictably. A "Low Stock" badge might appear on some product cards but not others. A promotional banner might only display during certain hours.

5. Progressive Loading and Lazy Rendering

Modern performance optimization means elements load asynchronously. A button might not exist in the DOM until the user scrolls. A modal might render with different element hierarchies depending on what content it needs to display.

Why Traditional Selectors Fail with Dynamic UIs

Selector-based testing tools (Appium, Espresso, XCUITest, Selenium) rely on finding elements through identifiers embedded in the code.

The Core Problem: Selectors Are Code-Dependent

Selectors work by matching patterns in the underlying code structure. When that structure changes, even if the visual appearance remains identical, the selector breaks.

Consider this scenario:

Before A/B Test:

    <Button id="checkout-v1" class="primary-cta">
      <Text>Checkout</Text>
    </Button>

After A/B Test (Variant B):

    <TouchableOpacity testID="checkout-experiment-b" style={styles.newCta}>
      <Label>Checkout</Label>
    </TouchableOpacity>

To a user, nothing changed. The button says "Checkout" and works the same way. But every selector targeting the original element now fails.

What Traditional Platforms Lack

Let's break down the specific limitations of Appium, Espresso, and other selector-based tools when dealing with dynamic UIs:

1. No Visual Understanding

Traditional tools are blind. They parse the DOM tree looking for matching attributes, but they have zero understanding of what's actually rendered on screen.

    // Appium sees this:
    {
      "element": "XCUIElementTypeButton",
      "identifier": "checkout-btn-exp-b",
      "frame": {"x": 120, "y": 450, "width": 200, "height": 48}
    }

    // Appium does NOT see:
    // - A green button with white text saying "Checkout"
    // - Positioned at the bottom of a cart summary
    // - With a shopping cart icon on the left

This means if the identifier changes but the button looks identical, the test fails. And if the button looks completely different but keeps the same identifier, the test passes, missing visual bugs entirely.

2. No Semantic Context

Selectors match strings, not meaning. They don't understand that "Checkout," "Complete Purchase," and "Place Order" all represent the same user action.

    from selenium.webdriver.common.by import By

    # This test breaks if copy changes (driver is an existing WebDriver session)
    driver.find_element(By.XPATH, "//button[text()='Checkout']")

    # Marketing changes button to "Complete Purchase"
    # Test fails. Not a bug. Just a copy change.

Traditional tools can't adapt because they don't understand intent, only exact matches.
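The gap between string matching and intent matching can be sketched in a few lines of Python. The `INTENT_LABELS` table here is a hand-written assumption for illustration; a vision-based system would infer meaning from the screen rather than look it up in a fixed table.

```python
# Hypothetical mapping from a user intent to button labels that express it.
INTENT_LABELS = {
    "checkout": {"checkout", "complete purchase", "place order"},
}


def exact_match(label, expected):
    """What a text-based selector does: compare strings literally."""
    return label == expected


def intent_match(label, intent):
    """Compare by meaning: any known label for the intent counts as a match."""
    return label.strip().lower() in INTENT_LABELS.get(intent, set())


new_label = "Complete Purchase"  # marketing's new copy
print(exact_match(new_label, "Checkout"))   # False: the old test breaks
print(intent_match(new_label, "checkout"))  # True: the intent is unchanged
```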

3. No Layout Awareness

When elements move, selectors break. XPath in particular encodes positional assumptions:

    // "Find the second button inside the third div"
    //div[3]/div/button[2]

    // Designer reorders the layout
    // Same button, same function, new position
    // Test fails.

Tools like Appium have no concept of "the checkout button at the bottom of the screen." They only know DOM paths that become invalid when layouts shift.

4. No Cross-Variant Intelligence

A/B testing tools serve different UI variants to different users. Traditional test frameworks have no mechanism to handle this gracefully:

    // Variant A: Uses React component
    driver.findElement(By.id("react-checkout-btn"));

    // Variant B: Uses native component
    driver.findElement(By.id("native-checkout-cta"));

    // Which one? You need separate tests, variant detection logic,
    // or you accept random failures when CI hits the "wrong" variant.

Most teams end up with brittle workarounds: try-catch blocks, multiple selector fallbacks, or separate test suites per variant. All of these increase maintenance burden.
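Here is roughly what the fallback-selector workaround looks like, sketched in Python. The `find_first` helper and the `fake_find` stand-in are hypothetical so the example runs without a device; with a real Selenium or Appium driver you would pass a function that wraps `driver.find_element` and catch the driver's own not-found exception instead of `LookupError`.

```python
def find_first(find, locators):
    """Try each locator in order; return (locator, element) for the first hit."""
    for locator in locators:
        try:
            return locator, find(locator)
        except LookupError:
            continue  # this variant is not on screen, try the next one
    raise LookupError(f"none of {locators} matched")


# Stand-in for a screen that is currently serving Variant B.
screen = {"native-checkout-cta": "<checkout button>"}


def fake_find(locator):
    """Mimics a driver lookup: raises when the locator is absent."""
    if locator not in screen:
        raise LookupError(locator)
    return screen[locator]


CHECKOUT_LOCATORS = ["react-checkout-btn", "native-checkout-cta"]
used, element = find_first(fake_find, CHECKOUT_LOCATORS)
print(used)
```

It works, but every new experiment means another entry in `CHECKOUT_LOCATORS`, and the list never shrinks on its own. That is the maintenance burden in miniature.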

5. No Self-Healing That Actually Works

Some tools claim "self-healing" capabilities: they try alternative selectors when the primary one fails. But this approach has fundamental limits:

- It's reactive, not proactive. Tests still fail first, then attempt recovery.

- It can't handle structural changes. If the element moved to a different branch of the DOM tree, fallback selectors won't find it.

- It creates false confidence. A "healed" test might now be clicking the wrong element entirely.

    // "Self-healing" found a new selector
    // Original: #checkout-btn
    // Healed to: .btn-primary (first match)
    //
    // Problem: .btn-primary now matches the "Cancel" button
    // Test passes. Logic is completely wrong.

True self-healing requires understanding what the element is, not just finding something with similar attributes.
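The false-confidence failure mode is easy to reproduce in a toy Python model. The element dictionaries and both function names below are assumptions for illustration, not the behavior of any specific self-healing product.

```python
# Simplified stand-in for a screen's elements after a redesign.
elements = [
    {"id": "cancel-order", "classes": ["btn-primary"], "text": "Cancel"},
    {"id": "cta-purchase", "classes": ["btn-primary"], "text": "Checkout"},
]


def naive_heal(elements, fallback_class):
    """Return the first element carrying the fallback class, whatever it is."""
    for el in elements:
        if fallback_class in el["classes"]:
            return el
    return None


def heal_with_check(elements, fallback_class, expected_text):
    """Same fallback, but reject candidates whose visible text is wrong."""
    for el in elements:
        if fallback_class in el["classes"] and el["text"] == expected_text:
            return el
    return None


print(naive_heal(elements, "btn-primary")["text"])                   # Cancel
print(heal_with_check(elements, "btn-primary", "Checkout")["text"])  # Checkout
```

The second function only gets it right because it checks what the element says, which is a small step toward understanding what the element is.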

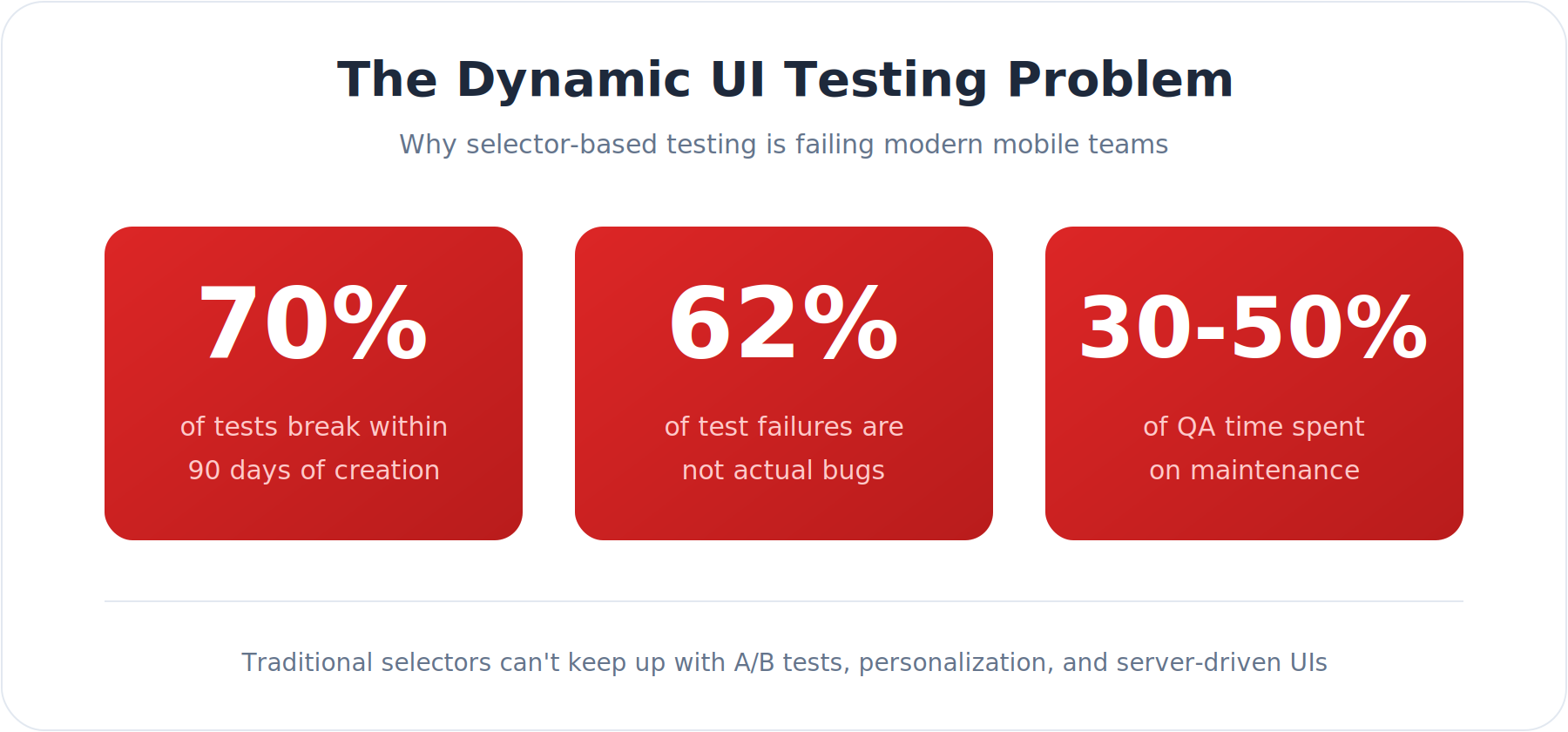

The Flakiness Epidemic

Industry data reveals the scope of this problem:

- Google found that approximately 16% of their tests are flaky, with 1 in 7 tests failing in ways unrelated to actual code changes (John Micco, Google Testing Blog, 2016)

- Traditional test automation teams spend 60–80% of their time on maintenance rather than new coverage (Virtuoso QA, Aqua Cloud industry benchmarks)

- 59% of software developers deal with flaky tests on a monthly, weekly, or daily basis (ACM Transactions on Software Engineering and Methodology, 2021)

- At scale, even small flake rates compound: Uber reported 1,000+ flaky tests in a single Go monorepo, meaning ~63% of PRs needed at least one rerun to clear false failures (Trunk.io analysis of 20.2M CI jobs)

- Google estimated flaky tests cost them over 2% of total coding time. For a 50-person team, that's one full-time engineer lost entirely to chasing phantom failures (Google Engineering Productivity Research)

For teams running A/B tests or personalization, these numbers are even worse. Each new experiment multiplies the surface area for selector failures.
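Two bits of arithmetic sit behind these figures, sketched below in Python. The first is the standard "at least one failure" probability, 1 - (1 - p)^n, which assumes every test flakes independently; the second is the 2%-of-50-people calculation. The 300-test, 0.1% inputs are made up for illustration and are not taken from any of the sources above.

```python
def p_false_failure(per_test_flake_rate, num_tests):
    """Chance that at least one of num_tests independent tests flakes in a run."""
    return 1 - (1 - per_test_flake_rate) ** num_tests


def engineers_lost(fraction_of_time, team_size):
    """Full-time-equivalent engineers consumed by a time overhead."""
    return fraction_of_time * team_size


# Illustrative inputs, not measurements: 300 tests, each flaking 0.1% of the time.
run_risk = p_false_failure(0.001, 300)
print(f"{run_risk:.0%} of runs hit at least one false failure")

# The 2% overhead on a 50-person team from the list above.
print(engineers_lost(0.02, 50))
```

Even a per-test flake rate that sounds negligible turns into roughly a quarter of all runs failing for no real reason once a few hundred tests are involved.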

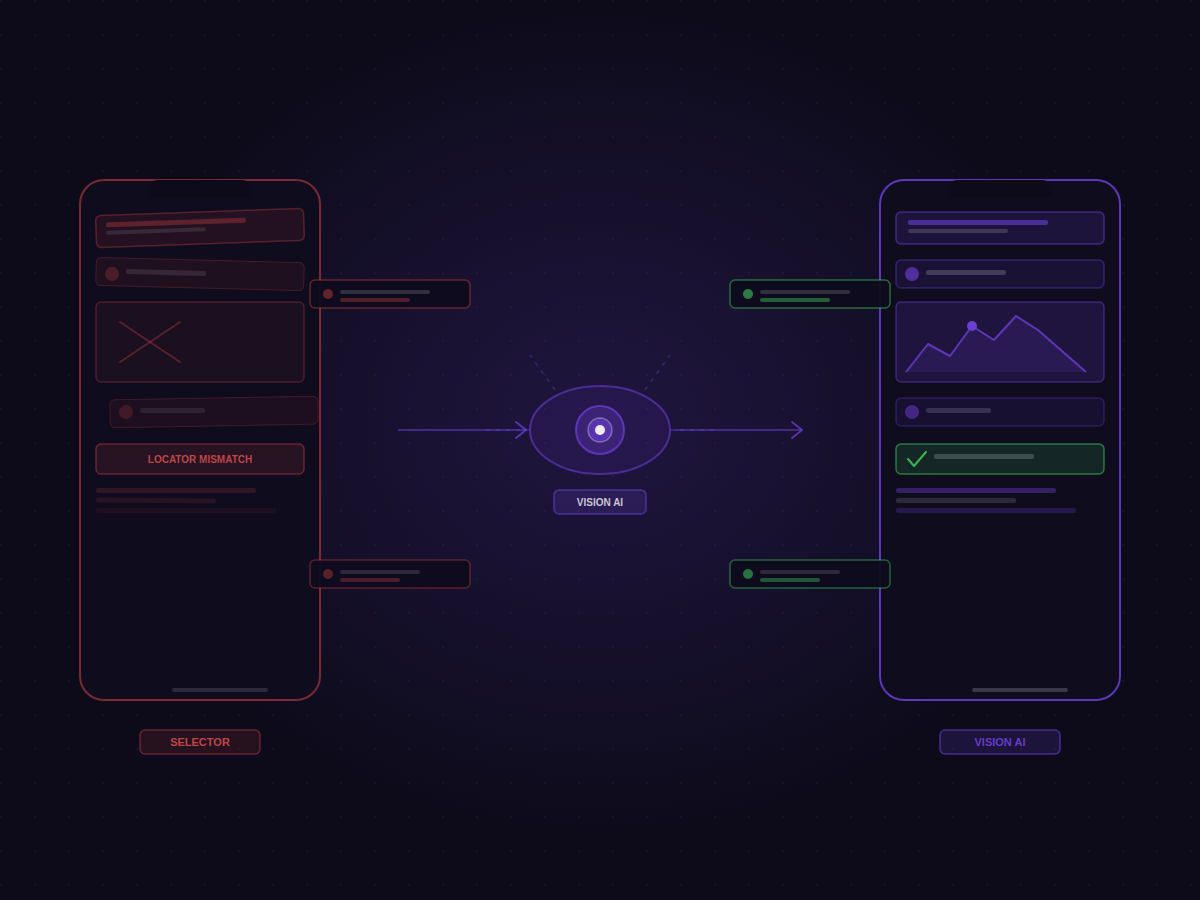

How Vision AI Solves the Dynamic UI Problem

Drizz takes a fundamentally different approach. Instead of searching for elements by their code identifiers, Drizz sees the screen the way a human does.

The Human Approach to Testing

Think about how a human tester handles a dynamic UI:

They look at the screen, identify the button labeled "Checkout," and tap it. They don't care if the underlying component is a <Button> or <TouchableOpacity>. They don't check the CSS class name. They simply see a button with text and tap it.

Drizz replicates this by treating every test step as a visual problem, not a code-lookup problem. At each step, it captures a screenshot and passes it through a computer vision model trained specifically on UI patterns: buttons, input fields, modals, navigation elements, loading states. The model returns a semantic understanding of what's on screen: not coordinates, not DOM attributes, but something closer to "there's a primary CTA in the lower-right area labeled Checkout."

Your test command, "tap the Checkout button", is matched against that semantic map, which means it doesn't matter if the underlying component is a <Button>, a <TouchableOpacity>, or something your SDUI server rendered at runtime. If it looks like a checkout button, Drizz finds it.
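As a toy illustration of matching a command against a semantic map, consider the Python sketch below. The `ScreenElement` fields and the `find_target` function are assumptions made for this example; this article does not describe Drizz's internal data structures, and a real system would get these elements from a vision model rather than a hand-written list.

```python
from dataclasses import dataclass


@dataclass
class ScreenElement:
    role: str    # e.g. "button", "text_field"
    label: str   # visible text as read from the screenshot
    region: str  # coarse location, e.g. "lower-right"


def find_target(elements, role, label):
    """Return the first element whose role and visible label match the command."""
    wanted = label.strip().lower()
    for el in elements:
        if el.role == role and el.label.strip().lower() == wanted:
            return el
    return None


# Hand-written stand-in for what a vision model might report for one screenshot.
semantic_map = [
    ScreenElement("text_field", "Promo code", "center"),
    ScreenElement("button", "Checkout", "lower-right"),
]

target = find_target(semantic_map, role="button", label="checkout")
print(target)
```

Notice what is absent from the lookup: no ID, no class name, no component type. Nothing in it changes when the implementation behind the button does.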

1. Visual Element Recognition

Drizz analyzes screenshots using computer vision to understand what's actually on screen:

- Identifies buttons, text fields, images, and interactive elements by their visual appearance

- Recognizes text content regardless of the underlying rendering component

- Understands spatial relationships between elements

- Detects element states (enabled, disabled, selected, loading)

2. Natural Language Test Commands

Write tests the way you'd describe them to a human:

Tap the "Add to Cart" button

Verify the cart shows 3 items

Scroll until you see "Checkout"

Enter "test@email.com" in the email field

No selectors. No XPath. No framework-specific syntax.

3. Context-Aware Element Matching

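When the UI changes, Drizz finds elements by visual context rather than code identifiers. A checkout button is located by its label and where it sits on screen, not by its ID or component type.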

4. Self-Healing Without Maintenance

Traditional "self-healing" tools attempt to fix broken selectors by trying alternative attributes. This works for minor changes but fails when components are fundamentally restructured.

Drizz doesn't need to heal selectors because it never relies on them. The visual appearance of "Checkout button in the bottom right" remains stable even when the underlying code changes completely.

Real-World Dynamic UI Scenarios

Let's examine how Drizz handles specific dynamic UI challenges:

Scenario 1: E-commerce A/B Test

An e-commerce team is testing two checkout flows:

- Control: Traditional multi-step checkout

- Variant: Single-page checkout with accordion sections

Traditional Testing Approach:

- Maintain two separate test suites

- Use feature flags to route tests to correct variant

- Update both suites when either changes

- Debug failures to determine if they're real bugs or variant mismatches

Drizz Approach:

Navigate to cart

Tap "Proceed to Checkout"

Enter shipping address

Enter payment information

Tap "Place Order"

Verify order confirmation appears

The same test works for both variants. Drizz finds the appropriate elements in each layout without modification.

Scenario 2: Personalized Homepage

A fintech app displays different dashboard layouts based on account type:

- Basic users: Simplified view with account balance and recent transactions

- Premium users: Full dashboard with investments, insights, and quick actions

- Business users: Multi-account overview with team management

Traditional Testing Approach:

- Create separate test suites for each user type

- Maintain three sets of selectors

- Update all three when shared components change

- Risk missing bugs that only appear in specific user contexts

Drizz Approach:

Login as [user_type] user

Verify dashboard displays account balance

Verify recent transactions are visible

If premium user, verify investment summary appears

If business user, verify team accounts are listed

Drizz adapts to whatever layout appears, validating the appropriate elements for each user context.

Scenario 3: Server-Driven UI Updates

A content app uses SDUI to push layout changes without app updates. The marketing team frequently rearranges content blocks, changes promotional banners, and experiments with navigation patterns.

Traditional Testing Approach:

- Tests break after every SDUI update

- QA team spends days updating selectors

- Regression testing backlog grows

- Teams stop running tests or accept high failure rates

Drizz Approach:

- Tests continue working regardless of layout changes

- Drizz validates content is visible and interactive

- Visual bugs (overlapping elements, truncated text) are caught

- QA team focuses on new coverage instead of maintenance

Implementing Vision AI Testing for Dynamic UIs

Most teams try to migrate their entire test suite at once. Don't. Full migrations stall the whole testing process: too many suites running in parallel, too many stakeholders, and too much pressure to just make CI green again.

Start with your three highest-churn tests instead. When vision-based testing solves your worst problems without maintenance, the rest of the case makes itself. Here's how a phased migration typically looks:

Week 1: Identify High-Churn Tests

Start by identifying tests that break most frequently due to UI changes:

- Tests tied to A/B experiments

- Tests covering personalized experiences

- Tests for frequently updated screens

- Tests with the highest maintenance burden

These are your candidates for migration to Drizz.
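If your CI system can export failure records, ranking tests by selector churn takes only a few lines of Python. The record format and the `selector_not_found` cause label below are hypothetical; adapt them to whatever your pipeline actually logs.

```python
from collections import Counter

# Hypothetical CI history: (test name, failure cause) for each failed run.
failures = [
    ("test_checkout", "selector_not_found"),
    ("test_checkout", "selector_not_found"),
    ("test_checkout", "assertion_failed"),
    ("test_homepage", "selector_not_found"),
    ("test_login", "assertion_failed"),
    ("test_homepage", "selector_not_found"),
    ("test_checkout", "selector_not_found"),
]


def rank_by_selector_churn(failures, top_n=3):
    """Count failures caused by missing selectors and return the worst offenders."""
    churn = Counter(name for name, cause in failures if cause == "selector_not_found")
    return churn.most_common(top_n)


candidates = rank_by_selector_churn(failures)
print(candidates)  # [('test_checkout', 3), ('test_homepage', 2)]
```

Failures with other causes, such as genuine assertion failures, are left out on purpose, since those tests may be doing their job.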

Weeks 2-3: Parallel Execution

Run Drizz tests alongside existing selector-based tests:

- Compare pass/fail rates

- Measure time spent on maintenance

- Identify bugs Drizz catches that selectors miss

- Document false positive rates for each approach
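One way to keep that comparison honest is to record, for every run, whether it passed and whether any failure was a real bug. A minimal Python sketch follows; the `summarize` function and the sample runs are invented for illustration and are not results from any real project.

```python
def summarize(runs):
    """runs: list of (passed, real_bug) pairs for one suite."""
    total = len(runs)
    failures = [real_bug for passed, real_bug in runs if not passed]
    false_failures = sum(1 for real_bug in failures if not real_bug)
    return {
        "pass_rate": (total - len(failures)) / total,
        "false_failure_rate": false_failures / total,
    }


# Hypothetical results from the same ten builds run through both suites.
selector_runs = [(True, False)] * 6 + [(False, False)] * 3 + [(False, True)]
vision_runs = [(True, False)] * 9 + [(False, True)]

selector_summary = summarize(selector_runs)
vision_summary = summarize(vision_runs)
print("selector:", selector_summary)
print("vision:  ", vision_summary)
```

Tracking the false-failure rate separately from the pass rate matters: a suite that fails because of a real bug is working, while a suite that fails because of a renamed ID is not.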

Week 4: Gradual Migration

Begin replacing high-maintenance selector tests with vision-based equivalents:

- Prioritize tests that break with every A/B experiment

- Focus on personalized user journeys

- Target SDUI-dependent screens


Ongoing: Expand Coverage

As confidence grows, expand vision testing to:

- All critical user flows

- Cross-platform consistency validation

- Visual regression detection

- Accessibility compliance verification

The Business Case for Vision AI Testing

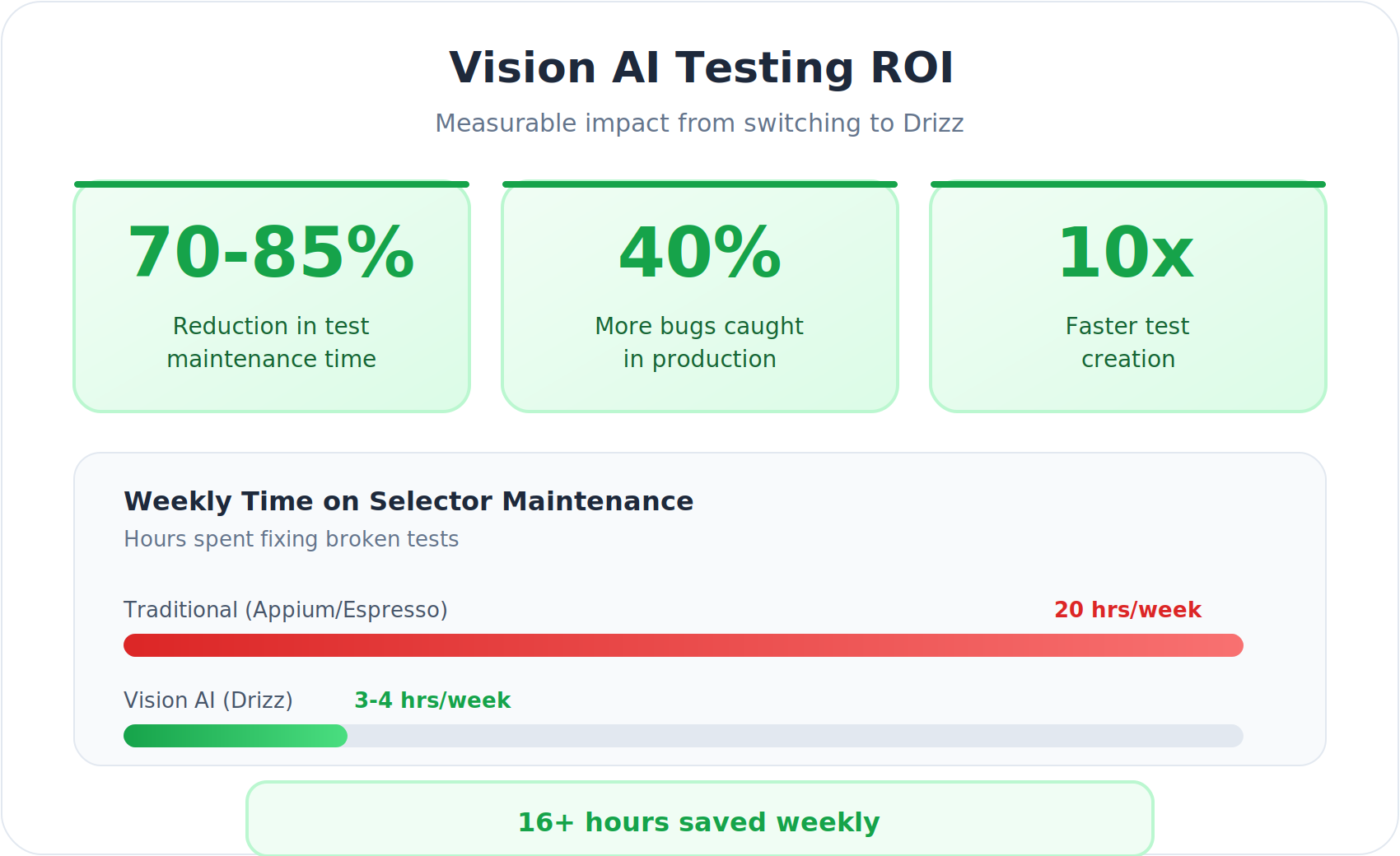

For engineering leaders evaluating Drizz, the ROI centers on three metrics:

1. Maintenance Time Reduction

Teams report a 70-85% reduction in test maintenance time after adopting vision-based testing. For example, a fintech team running 400+ UI tests across personalized dashboards reduced their maintenance sprint from 3 days to 4 hours after migrating their highest-churn flows to Drizz. Their selectors broke after every biweekly release cycle; with vision-based testing, the same test suite ran green through 12 consecutive releases without a single maintenance touch. For a team spending 20 hours per week on selector fixes, that kind of reduction translates to 14-17 hours returned to productive work each week, time that goes back into writing new coverage or shipping features.
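The 14-17 hour figure is just the reported reduction range applied to a 20-hour weekly baseline. A quick Python check, where `hours_returned` is an illustrative helper name, lets you plug in your own team's numbers:

```python
def hours_returned(weekly_maintenance_hours, reduction):
    """Hours per week freed up by a given fractional reduction in maintenance."""
    return weekly_maintenance_hours * reduction


low = hours_returned(20, 0.70)
high = hours_returned(20, 0.85)
print(f"{low:.0f}-{high:.0f} hours per week")
```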

2. Experimentation Velocity

When tests don't break with every A/B experiment, product teams can iterate faster:

- No QA bottleneck before launching experiments

- Faster feedback on experiment results

- More experiments run per quarter

- Better data for product decisions

3. Bug Detection Rate

Vision AI catches issues selectors miss entirely:

- Layout bugs from A/B variant conflicts

- Visual regressions from personalization logic

- SDUI rendering errors

- Cross-platform inconsistencies

Teams report 40% more production bugs caught in the first quarter of adoption.

When Selector-Based Testing Still Works

To be balanced, selector-based and other code-level testing remains appropriate for:

- Unit-level component testing with stable interfaces

- API contract testing where visual representation doesn't matter

- Performance testing focused on response times

- Legacy apps with frozen UIs and no experimentation

However, for any app with dynamic content, personalization, A/B testing, or server-driven interfaces, vision-based testing delivers superior results with dramatically lower maintenance.

Vision-based testing has its own scenarios that require more care. UIs with multiple visually identical elements, such as "Add to Cart" appearing on every card in a 20-item grid, need slightly more spatial context in the test command: "tap the Add to Cart button on the first product card" rather than just "tap Add to Cart." The same applies to icon-only interactive elements, heavily animated transitions, and interfaces that change dramatically between light and dark mode. These aren't blockers, but they do mean your test commands need to be written with a bit more precision in those specific cases.
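Why the extra spatial context helps can be shown with a small Python sketch. The detection dictionaries and the `nth_match` helper are assumptions for illustration: when several elements share a label, something has to impose an order before "first" means anything.

```python
# Hypothetical detections: every product card has an identical button.
detections = [
    {"label": "Add to Cart", "x": 40, "y": 620},
    {"label": "Add to Cart", "x": 40, "y": 220},
    {"label": "Add to Cart", "x": 220, "y": 220},
]


def nth_match(detections, label, n=0):
    """Order matches top-to-bottom, then left-to-right, and return the nth one."""
    matches = [d for d in detections if d["label"] == label]
    matches.sort(key=lambda d: (d["y"], d["x"]))
    return matches[n]


first = nth_match(detections, "Add to Cart")
print(first)  # {'label': 'Add to Cart', 'x': 40, 'y': 220}
```

Reading order is only one possible convention. The point is that "tap Add to Cart" alone leaves the choice undefined, while "on the first product card" pins it down.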

The honest summary: neither approach is universal. Selector-based testing is reliable when your UI is stable and your codebase is frozen. Vision-based testing is the better default when your product is actively evolving, which, for most teams, is most of the time.

Conclusion

Dynamic UIs are the new normal. A/B testing, personalization, server-driven interfaces, and real-time content updates aren't going away; they're accelerating.

The testing industry's response has been band-aids: self-healing selectors, smarter locator strategies, better Page Object patterns. These help, but they don't solve the fundamental problem. Selectors are tied to code. Code changes. Tests break.

Drizz's Vision AI represents a paradigm shift. By testing what users actually see rather than what developers write, vision-based testing finally decouples test stability from code volatility.

If your team runs A/B experiments or maintains a personalized UI, the best way to see the difference is to import one of your flakiest test flows into Drizz and run it. Most teams identify 3–5 test failures that aren't real bugs within the first hour. Book a Demo

Test like humans see.